AWE Architecture Document

Introduction

Audio Weaver is a development platform for audio product developers. It contains a graphical editor (Designer) that is used to configure run-time libraries of audio processing functions (AWECore). This document provides an overall description of Audio Weaver's architecture and is a good starting point for anyone new to the technology. This guide covers general Audio Weaver principles and is applicable to a range of processors from microcontrollers, through DSPs, and all the way to multicore SOCs. These principles apply across processors, but the specific implementation may vary depending on the details of a particular system.

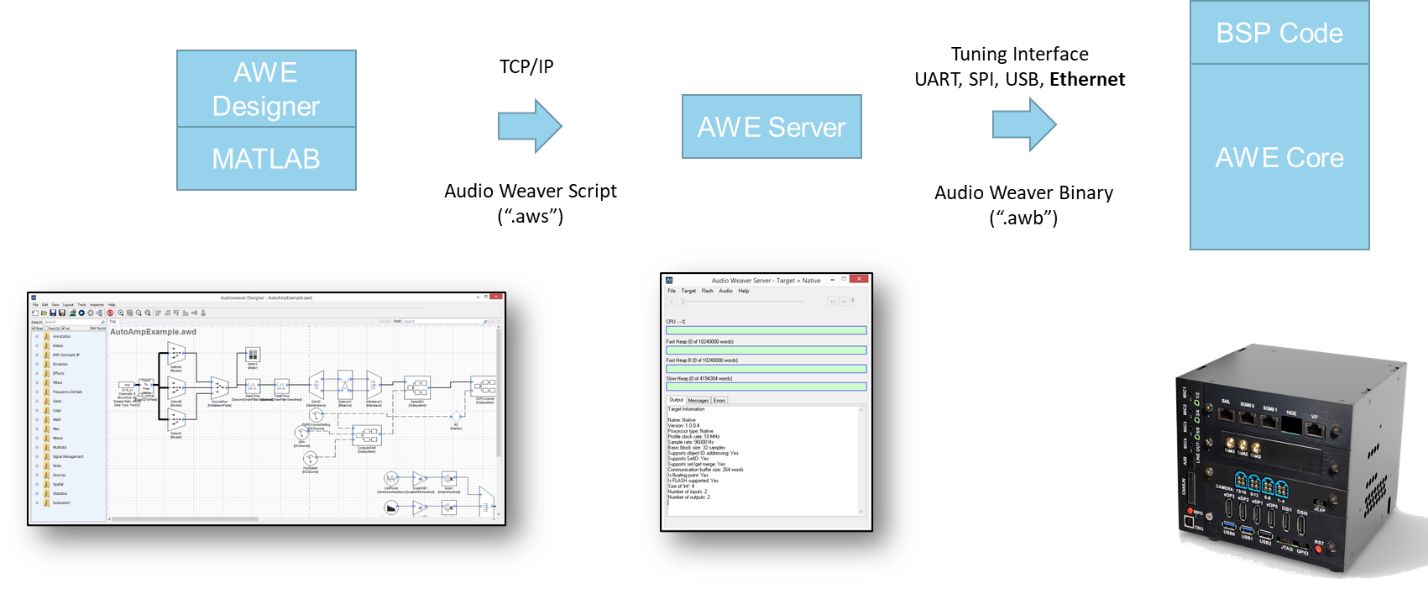

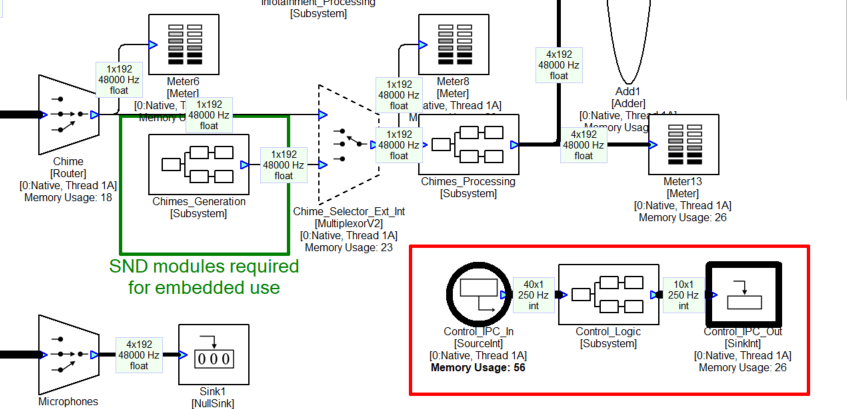

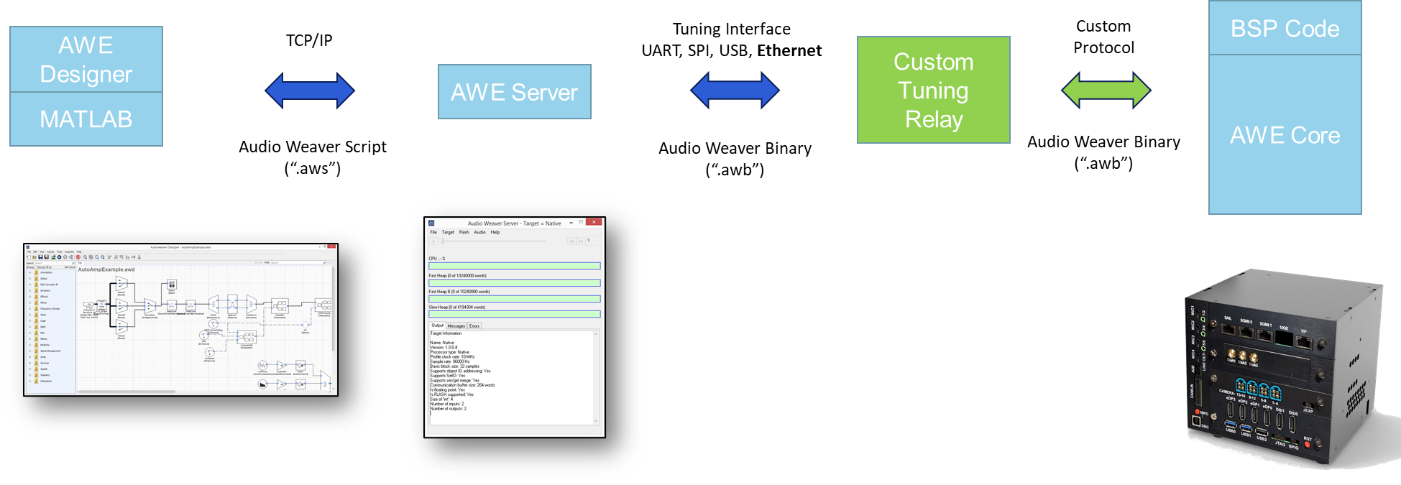

The high-level architecture of Audio Weaver is shown in Figure 1 below. Audio Weaver Designer is the primary interface. Designer is built upon a foundation of MATLAB scripts. MATLAB is used for automation, scripting, and parameter calculations. The "Server" is a Windows program that has 2 functions:

- Contains an internal Windows run-time target that performs real-time simulations.
- Manages communication between the PC and the embedded target.

The Server communicates to the target via the "tuning interface". This could be Ethernet, USB, UART, or SPI depending on the peripherals available on the target.

Figure 1. High-level Audio Weaver architecture showing the various components and how they communicate.

The embedded target contains a board support package (BSP) which manages real-time I/O and the tuning interface. The BSP feeds blocks of audio data to AWECore for processing. The BSP is usually custom written for each target. AWECore libraries are available for the instruction sets shown in Figure 2 and we continue to add new instruction sets to meet market demand. The code is highly optimized and designed for efficient real-time execution.

Arm: Cortex-M4, Cortex-M7, Cortex-M33, Cortex-M55, Cortex-A

Tensilica: HiFi-2, HiFi-3, HiFi-4, HiFi-5, HiFi-Mini

Qualcomm: Hexagon including HVX, Kalimba

Intel and AMD: x86, x64

Analog Devices: SHARC, SHARC+, SHARC-FX

Texas Instruments: C6x, C7x

CEVA: X2

XMOS: xcore-ai

Figure 2. Instruction sets supported by Audio Weaver.

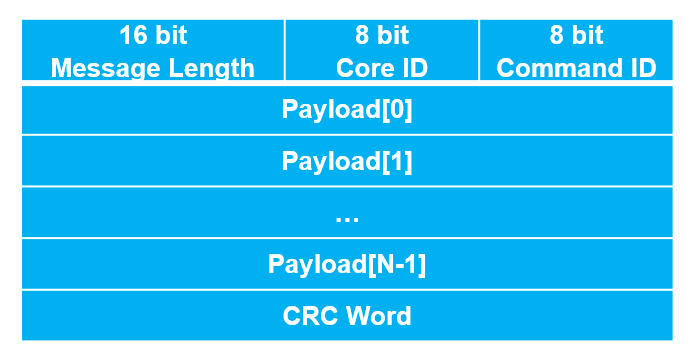

Designer and MATLAB communicate with the Server over TCP/IP. Text messages are sent using Audio Weaver Script (.aws). This is a human readable text format and is like a netlist. The script describes the overall system including modules used, connections, and parameter settings. The Server translates the script to Audio Weaver Binary (.awb) messages. The binary format contains the same information but is easier to decode on the embedded target.

The term "tuning interface" will be used to describe the connection between the Server and the target processor. Note that the tuning interface and protocol are used to build the system, to tune parameters in the lab, and also to provide run-time control in a deployed product.

There are 3 versions of the Designer tools available:

Standard -- Provides access to the graphical interface and does not require a MATLAB license. The Standard version is sufficient for most users building, tuning, and deploying systems.

Pro -- Includes access to the MATLAB scripts. Useful for testing and build automation. Requires a MATLAB license[^1].

Pro + Custom Module SDK -- For engineers creating new modules to be used in Audio Weaver. This includes writing C code or wrapping existing libraries. Requires a MATLAB license.

The document begins with an overview of Audio Weaver and the breadth of features. Then we describe the real-time architecture used for audio processing. This includes basic single threaded systems and more sophisticated multithreaded and multicore systems. Then we discuss modules, their underlying architecture, and how they fit into the run-time framework. Next, we discuss how to control processing in products. We give a brief overview of the MATLAB automation API and lastly discuss how Audio Weaver run-time libraries are incorporated into products.

Audio Weaver Basics

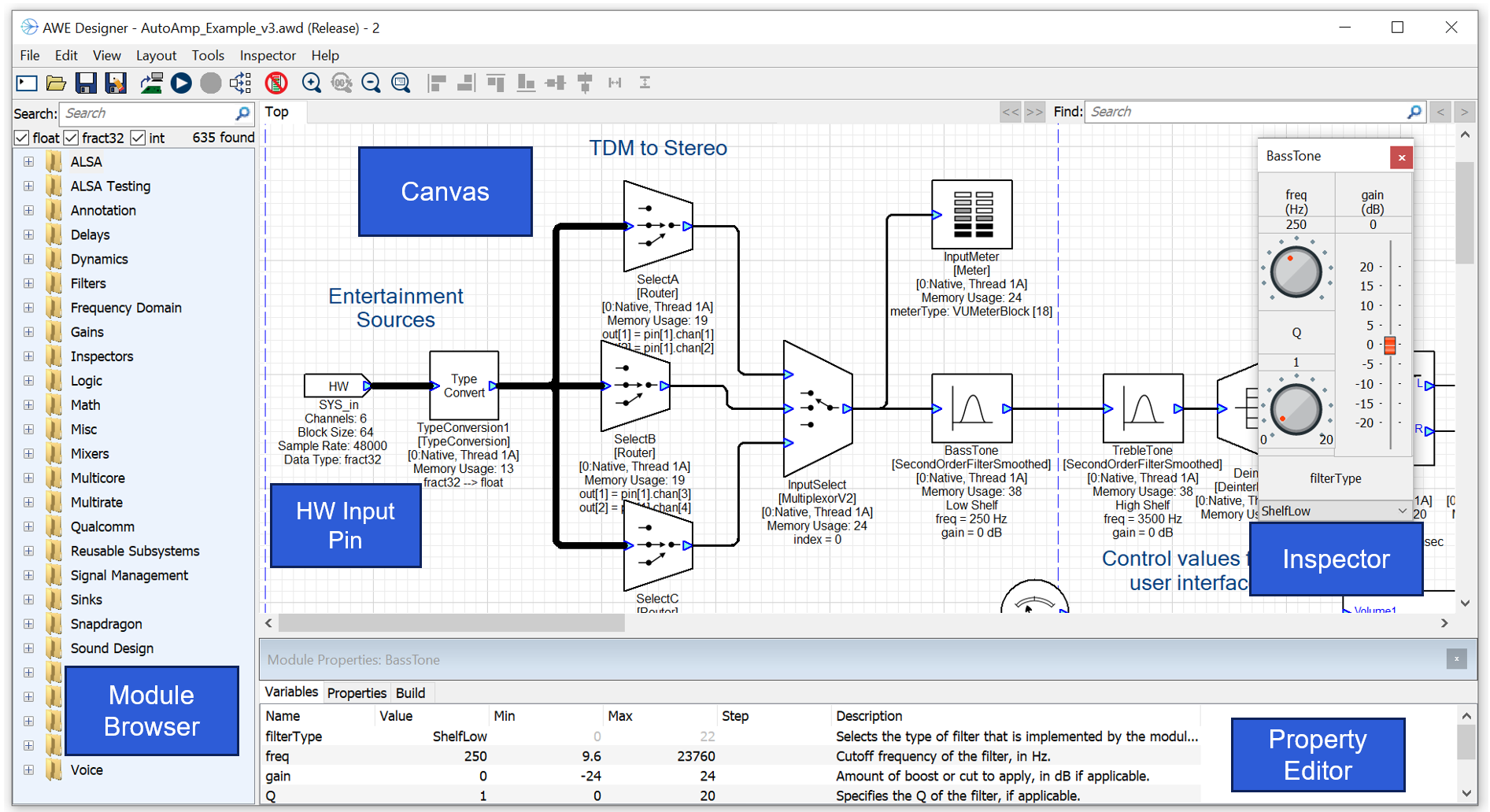

Designer is a PC tool for creating and tuning signal flows. The main window is shown in Figure 3 with several key areas highlighted. The Module Browser is where you find modules and then drag them onto the Canvas. You then use Inspectors and the Property Editor to change parameters.

Figure 3. Main Audio Weaver Designer window.

Designer

Hardware Input and Output Pins

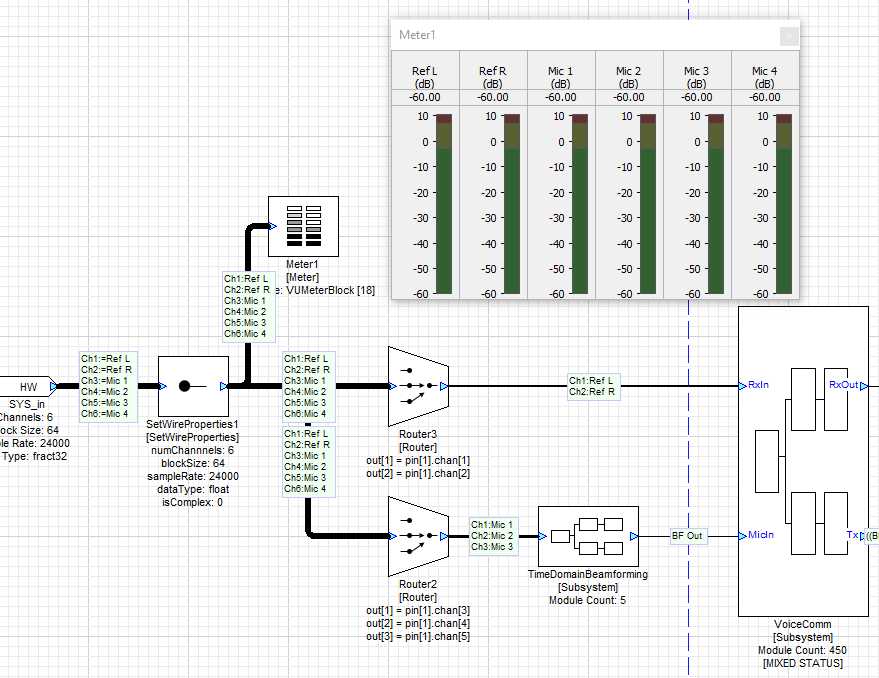

Audio arrives into the system on the HW Input Pin. In the example shown above, this is 6 channels of audio at 48 kHz with a block size of 64 samples. The audio arrives as fixed-point data (fract32) and is converted to floating-point by the TypeConvert module. Audio Weaver currently supports one HW Input Pin and one HW Output Pin, and all I/O is funneled through these interfaces. The Designer GUI allows you to configure properties of the HW Input Pin while the properties of the HW Output Pin are inherited from the upstream modules.

Modules and Wires

The signal flow is composed of Audio Modules and the connections are called Wires. Each wire corresponds to a buffer of data in memory, and these buffers are reused when no longer needed. This reduces the total memory needed for a system. Audio Weaver stores audio samples in an interleaved format and modules are designed to operate on interleaved data.
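To make the interleaved layout concrete, here is a minimal sketch in Python. It assumes the conventional meaning of interleaving, where sample `n` of channel `ch` sits at index `n * num_channels + ch`. The function names are hypothetical and are not part of any Audio Weaver API.

```python
def deinterleave(buffer, num_channels):
    """Split an interleaved buffer into one list per channel."""
    if len(buffer) % num_channels != 0:
        raise ValueError("buffer length must be a multiple of num_channels")
    # Channel ch occupies every num_channels-th sample, starting at offset ch.
    return [buffer[ch::num_channels] for ch in range(num_channels)]


def interleave(channels):
    """Merge equal-length per-channel lists into one interleaved buffer."""
    block_size = len(channels[0])
    if any(len(c) != block_size for c in channels):
        raise ValueError("all channels must have the same block size")
    return [channels[ch][n]
            for n in range(block_size)
            for ch in range(len(channels))]


# Stereo wire with a block size of 4: L0 R0 L1 R1 L2 R2 L3 R3
wire = [0.1, -0.1, 0.2, -0.2, 0.3, -0.3, 0.4, -0.4]
left, right = deinterleave(wire, num_channels=2)
```

A module written for interleaved data can step through the buffer with a stride equal to the channel count instead of copying each channel out first.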

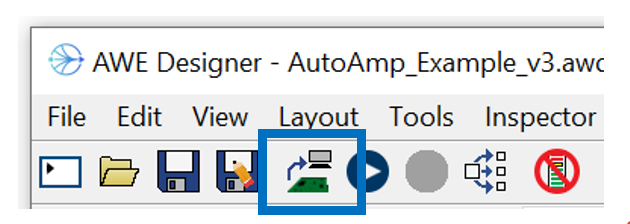

Design and Tuning Modes

When you first launch Designer, it is in Design Mode. Here you can update the signal flow, add and delete modules, and change wire information. When you are ready to listen, hit the Build button on the toolbar. The system is built, real-time audio starts, and Designer enters Tuning Mode. In Tuning Mode, parameter changes are sent to the target and you can hear the changes instantly. Tuning Mode also supports profiling and visualization of signals (Meters and Sink inspectors).

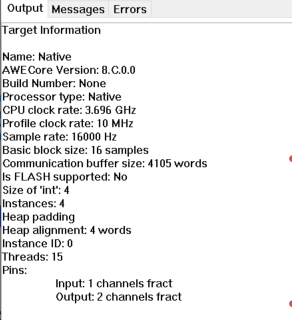

Native PC Simulation

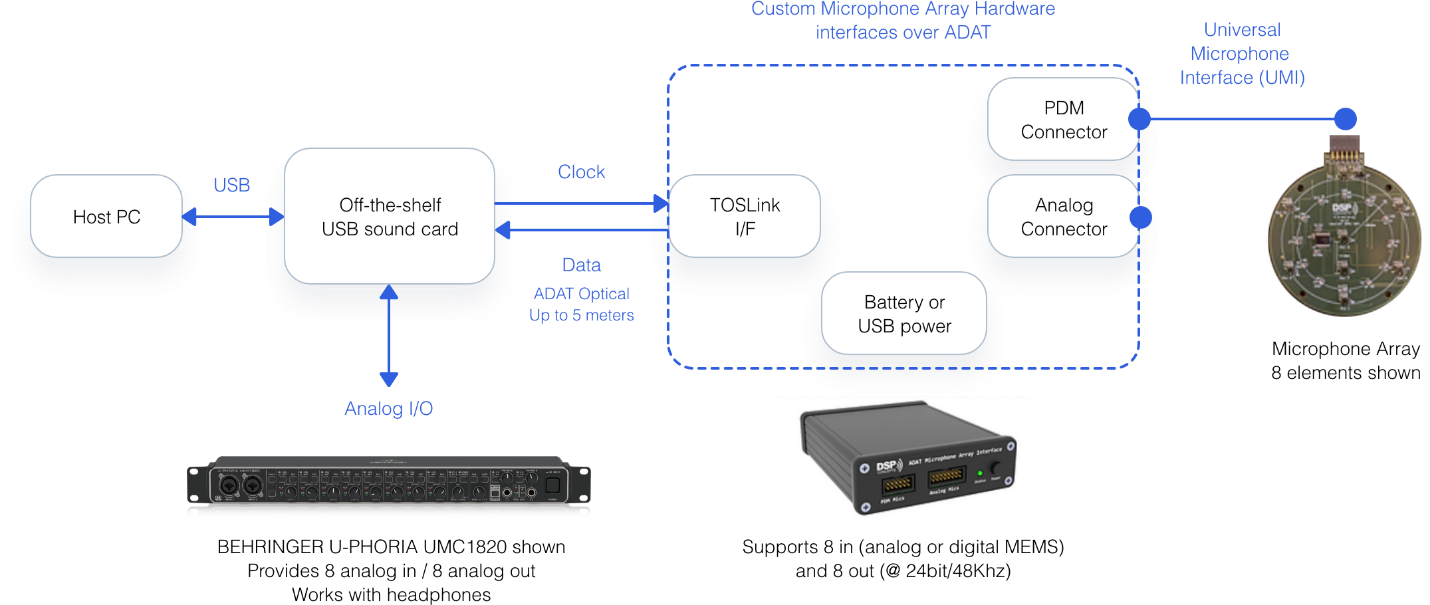

Audio Weaver supports real-time audio processing on the PC. This is called the Native PC Target and allows development to happen independent of target hardware. The PC makes an ideal development environment with a very powerful processor, large memory resources, and a variety of audio devices. Audio Weaver supports real-time audio through DirectX or ASIO I/O. Many customers leverage off-the-shelf sound cards for these types of simulations. DSP Concepts also separately sells rapid prototyping hardware which combines line level I/O with microphones. All I/O is synchronized allowing echo cancelers and other voice processing to run smoothly. The DSP Concepts RAPD Kit is shown in Figure 4.

Figure 4. DSP Concepts RAPD Kit provides synchronized audio I/O for Native PC simulation.

80 to 90% of Audio Weaver development happens on the PC independent of target hardware. In Native PC Mode, you can create and tune signal flows, integrate new modules, perform A/B listening tests, and develop regression tests. Running on target hardware is used to verify resource consumption and to interface to the actual peripherals used.

Attaching to a Running Target

Audio Weaver is also able to attach to a running system without disturbing the audio processing. This is similar to attaching a debugger to a running processor. It allows you to examine system state without reloading and restarting the system. Refer to Attach to Running Target in the Audio Weaver Designer User's Guide for more information on this feature.

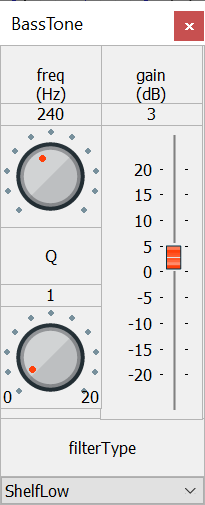

Inspectors

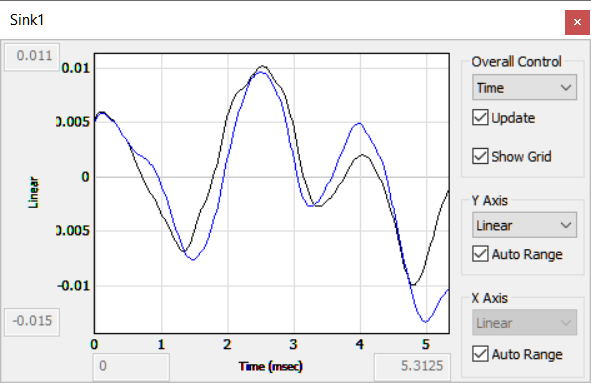

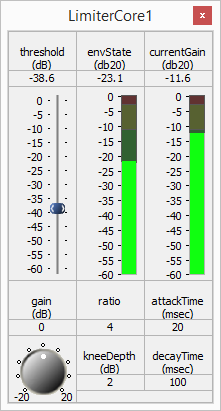

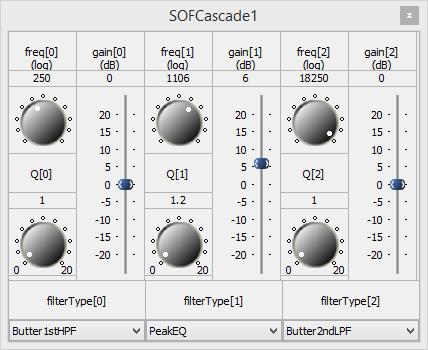

Examples of inspectors are shown in Figure 5. Audio Weaver provides a base set of GUI widgets (knobs, sliders, drop lists, meters, etc.) that you can use when developing custom inspectors. Inspectors are available in both Design and Tuning Modes.

Figure 5. Examples of some inspectors that are available in Audio Weaver.

Hierarchy

Audio Weaver supports hierarchy via the subsystem module. This allows you to organize your signal flow by placing specific functions into their own subsystems. Audio Weaver supports arbitrary levels of nesting, so you can place subsystems within subsystems, and so forth. You can also visually create custom subsystem inspectors using the Inspector Designer, and then use them to tune your subsystem. It is easy to create reusable subsystems and share them with your team members.

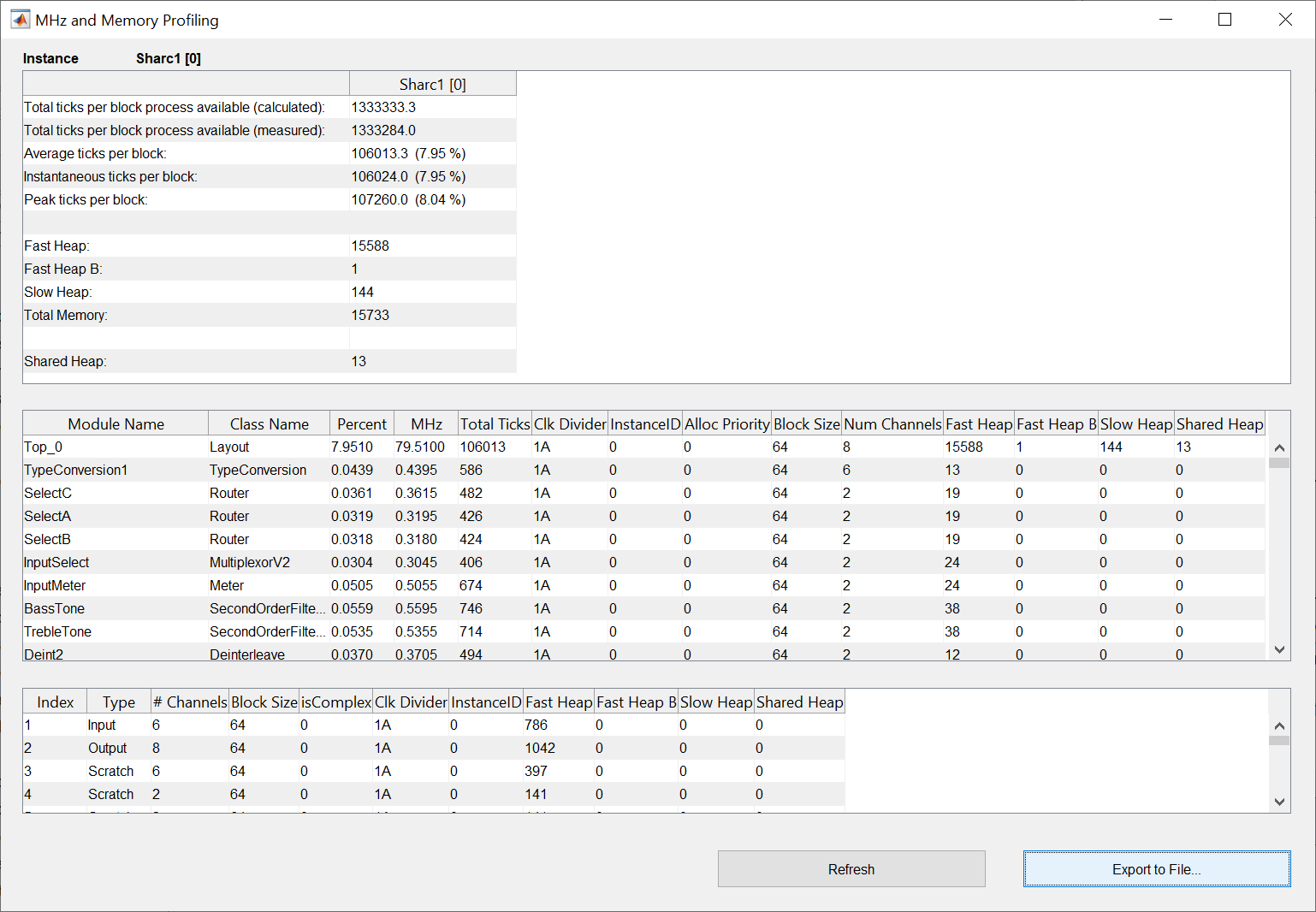

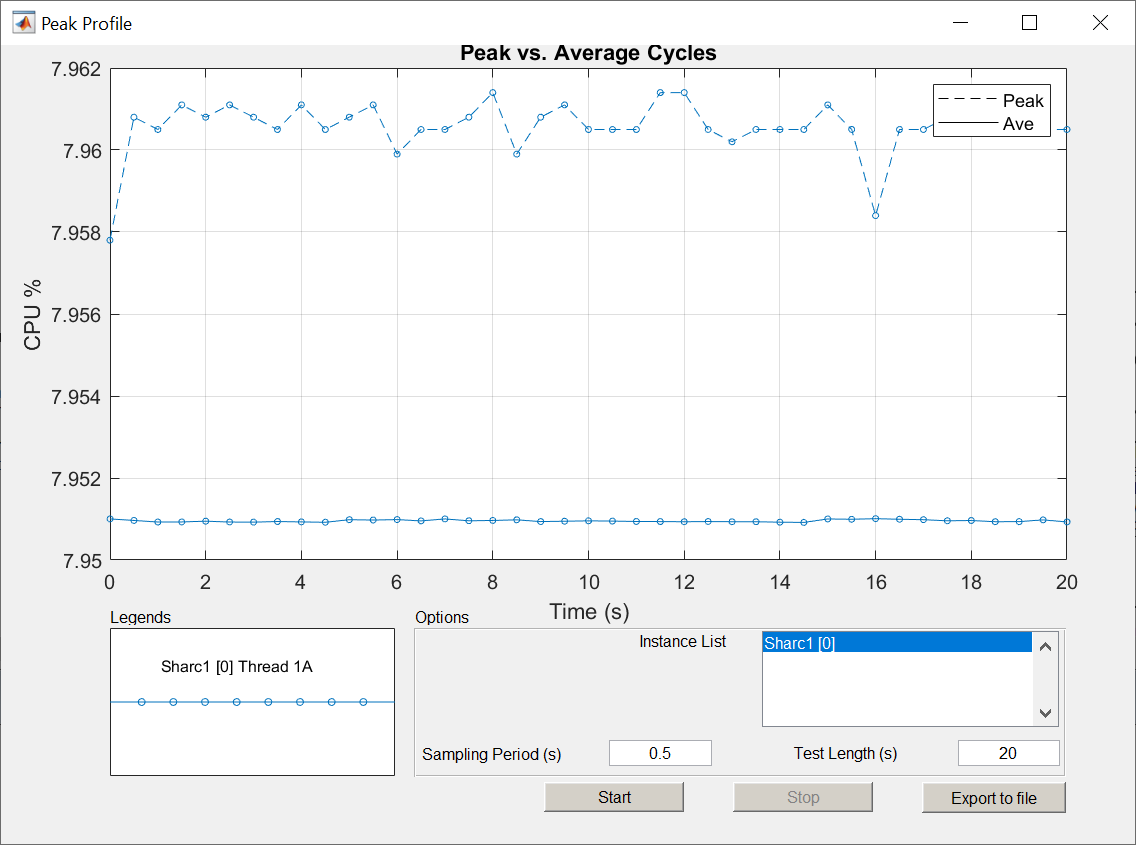

Profiling Resource Consumption

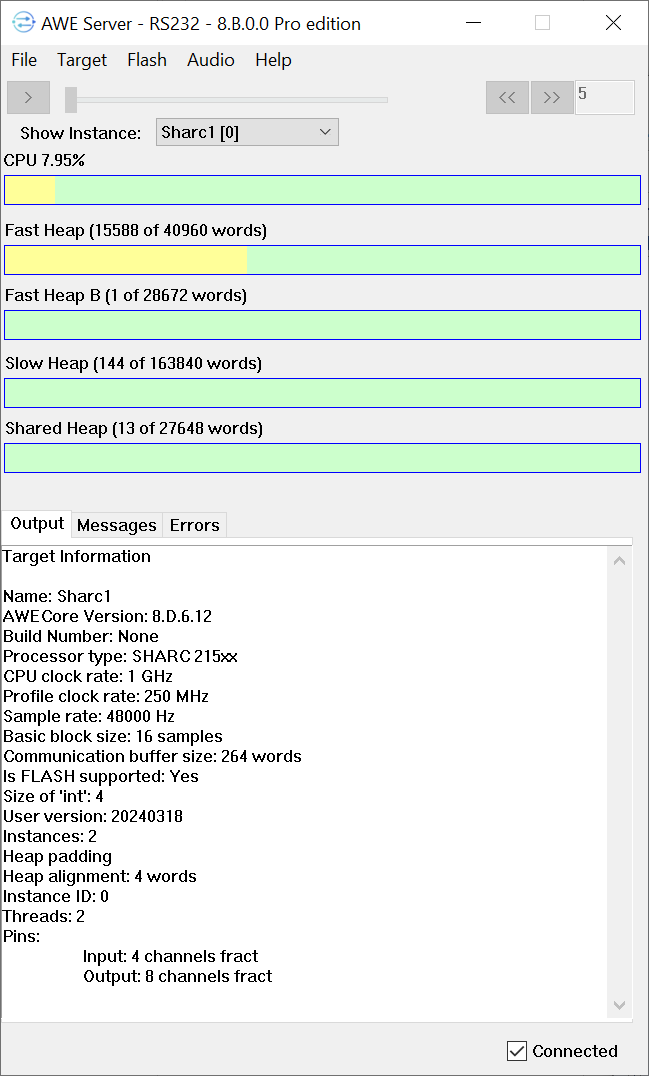

Audio Weaver provides multiple methods of profiling your design to determine resource consumption. These are illustrated in Figure 6. By default, the Server window shows aggregate information when in Tuning mode. Designer can go a step further and provide detailed module-by-module information. And finally, if you are concerned about variations in CPU load, you can use the Peak Profiling feature to identify issues.

Figure 6. Multiple methods of profiling in Audio Weaver. (a) Aggregate information on the Server window. (b) Detailed per module information. (c) Peak profiling showing variation over time.

Advanced Signal Flow Design

Audio Weaver has a wire buffer format which provides a high degree of design flexibility. Each wire buffer locally encodes the number of channels, block size, and sample rate. With this you can:

- Define multichannel signals holding from 1 to 1023 channels. Processing 5.1, 7.1, or 7.1.4 signals is straightforward.
- Mix processing at different sample rates. For example, playback processing can be run at 48 kHz while voice processing is at 16 kHz.
- Run control rate processing at a decimated rate to reduce CPU consumption.
- Use multithreading to mix block sizes and execute processing at different thread priorities.
- Use multicore processing to seamlessly partition processing across multiple cores in an SOC.

You can use these features to optimize your signal flow and reduce the CPU load. In general,

- Larger block sizes are more efficient than smaller block sizes.
- Using an N-channel representation is better than processing N mono channels.
- Processing at a lower sample rate, or decimated rate, is more efficient.

Your signal flow can also incorporate feedback. Feedback occurs on a block basis and is useful, for example, when generating reference signals for an echo canceller, or designing reverb effects.

Section 3 provides more details on Audio Weaver's real-time architecture.

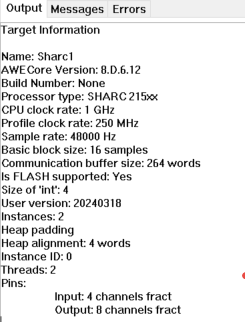

Target Information

Information about the currently connected target is shown on the Server window as illustrated in Figure 7. You can switch between targets using the "Target→Change Connection" menu of the Server.

Figure 7. Target information shown on the Server. The window on the left corresponds to Native Mode when the audio processing is performed on the PC. The right side is when connected to a SHARC 21594 EZ-KIT.

File Formats

Audio Weaver stores your signal flow using two different file formats:

.awd -- binary format used natively by MATLAB

.awj -- JSON text format

You select which format to use when saving a design. We recommend that you use the AWJ format because the files are smaller, compatible with source code repositories, and easier to compare.

Advanced Tools

Audio Weaver has additional tools which are useful in the product development process. We touch briefly on them here and more details can be found at the online Documentation Hub.

Batch File Processing

In Native mode, you can quickly process WAV files through your design. The processing is faster than real-time and is useful for batch processing of large datasets. You access this through the Designer "Tools→Process Files" menu item.

Tuning Interface Test

This is useful for software developers integrating the AWECore libraries on new hardware. The regression test is accessed through the Designer "Tools→Tuning Interface Tests" menu item. The tests measure the robustness and speed of the tuning interface.

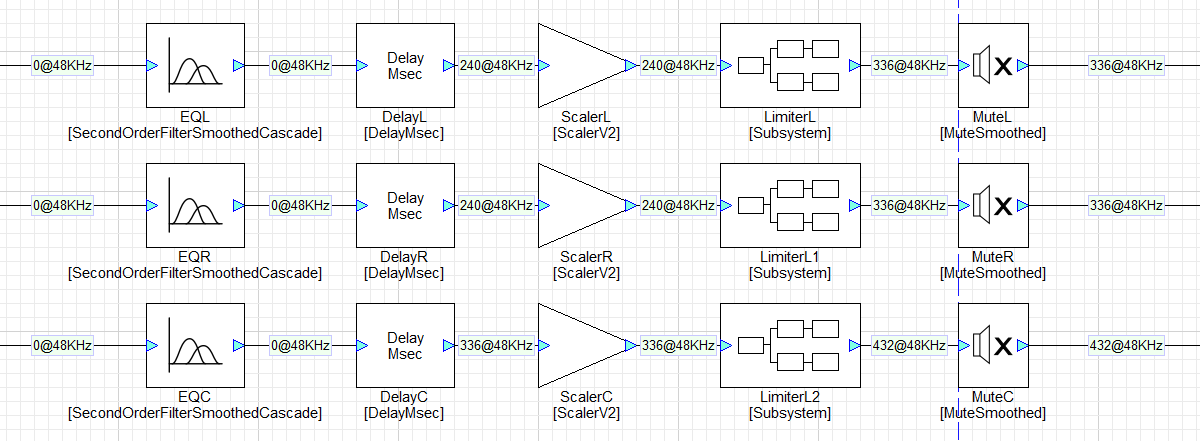

Computing Frequency Responses

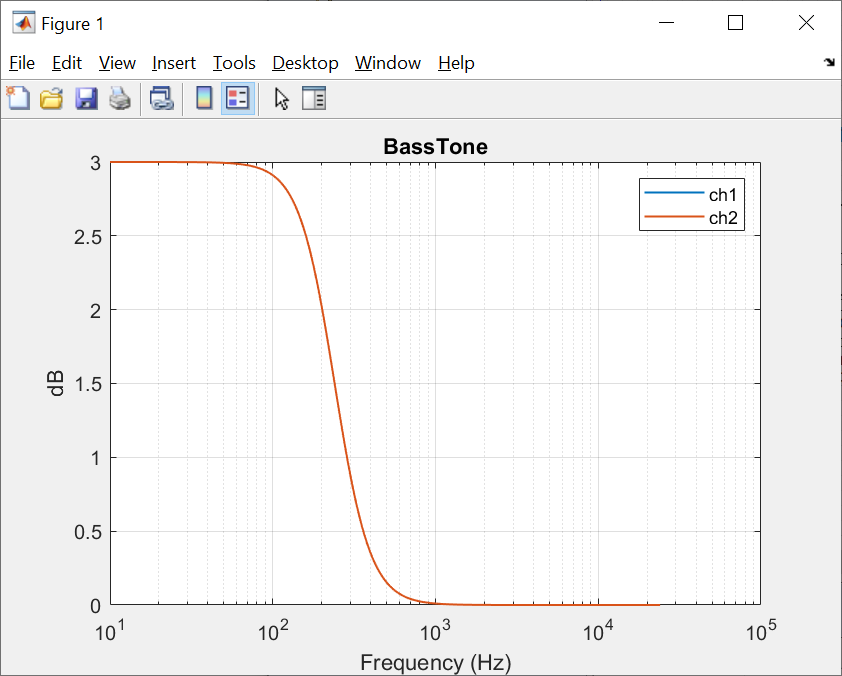

If your module is linear and time invariant (i.e., a "filter") then Designer is able to compute its frequency response. Right-click on the module in the canvas and select "Plot Frequency Response". An example is shown below.

Figure 8. Frequency response for a second order filter configured as a low-shelf.

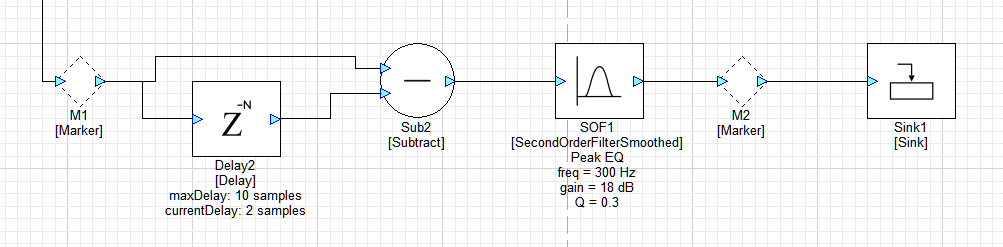

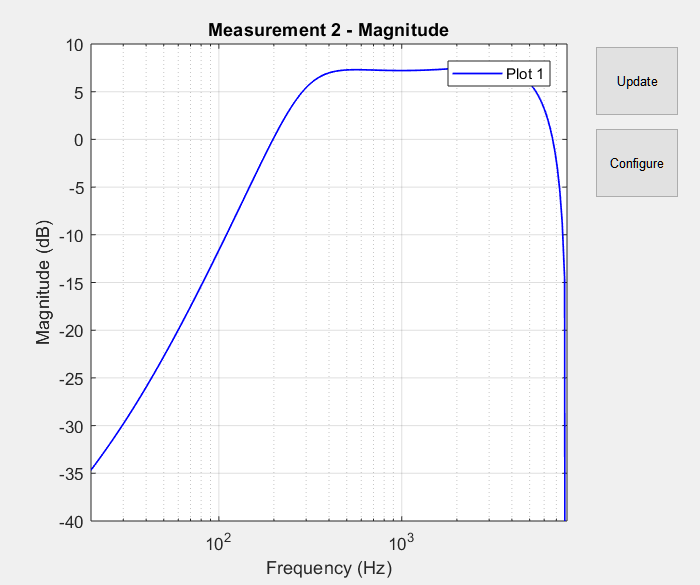

Designer is able to compute the frequency response between two locations in the signal flow. Add Marker Modules to the wires you want to measure between, and then use the "Tools→Measure Frequency Response" feature of Designer. An example is shown below.

Figure 9. Point to point frequency response measurements are made between two Marker modules in the Designer Canvas. In this example, the measurement is made between Markers M1 and M2.

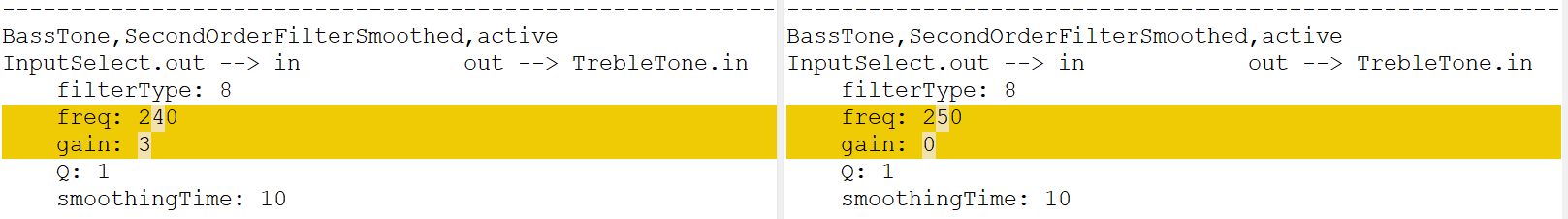

Diffing Systems

Audio Weaver contains tools for comparing two systems. The "Tools→Show Unsaved Changes" menu item displays what changes were made since the file was opened. This is useful to see what variables were updated during a tuning session. You can also compare two different designs using the "Tools→Compare Layouts" feature. In both cases, Audio Weaver generates a text "list file" containing parameter values and wiring information and then uses an external diffing tool to show what changed. An example is shown below.

Figure 10. Example showing the text list format file and how changes can be found using standard diffing tools.

Debug and Release Builds

Audio Weaver has a feature which allows you to selectively include / exclude modules. Modules in the signal flow can be marked as being "Debug" or "Release". Release is the default when you first drag a module to the canvas. You can then configure Designer to do a "Release" or "Debug" build. In a Debug build, all modules in the design are included. In a Release build, all modules marked as Debug are removed. In practice, you may add extra Meter and Sink modules to your design for debugging and then exclude these from the Release build.
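The selection rule described above can be sketched in a few lines of Python. This is only an illustration of the rule as stated in the text. The `ModuleEntry` class, the `build_tag` field, and the module names are hypothetical and do not reflect how Designer stores this information.

```python
from dataclasses import dataclass


@dataclass
class ModuleEntry:
    name: str
    build_tag: str = "Release"  # Release is the default for a new module


def modules_for_build(modules, build_type):
    """Return the modules that are included in the given build type."""
    if build_type == "Debug":
        # A Debug build includes every module in the design.
        return list(modules)
    if build_type == "Release":
        # A Release build removes every module marked as Debug.
        return [m for m in modules if m.build_tag != "Debug"]
    raise ValueError(f"unknown build type: {build_type!r}")


design = [
    ModuleEntry("Scaler1"),
    ModuleEntry("Meter1", build_tag="Debug"),  # extra meter used for debugging
    ModuleEntry("FIR1"),
]
release_names = [m.name for m in modules_for_build(design, "Release")]
debug_names = [m.name for m in modules_for_build(design, "Debug")]
```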

Channel Names

Audio Weaver allows you to add names to the channels in your design. To get started, right-click on a wire and select "Edit Channel Names". Name information is then propagated throughout and displayed on level meters and multichannel controls. An example is shown below. For more information, search for "Channel Names" on the Documentation Hub.

Figure 11. Example of channel names used in the Designer GUI.

Channel Latency

In many audio applications, it is important to maintain alignment of audio channels throughout the signal flow. Audio Weaver can be set up to track and report latency. The feature is similar to channel names and an example is shown below.

Figure 12. Example showing latency tracking in the Designer GUI.

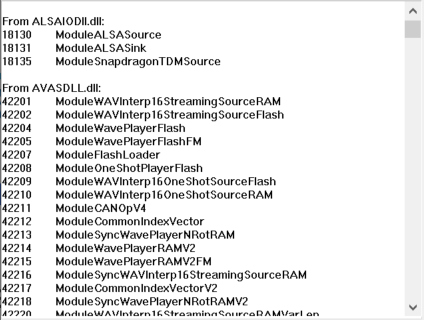

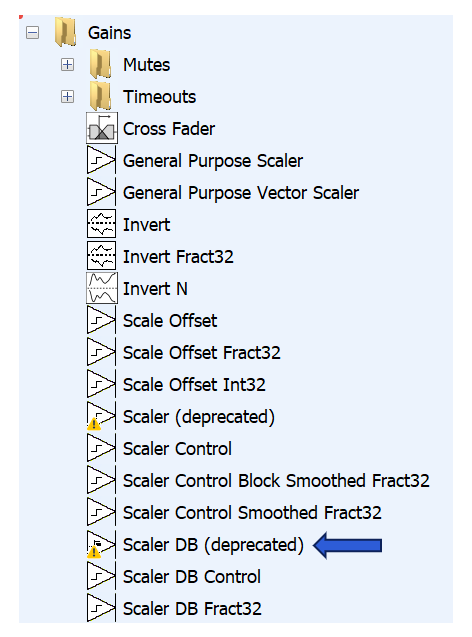

Module Libraries

Audio Modules are organized into Module Libraries in Audio Weaver. Each module library is contained in its own Windows DLL, and the DLL is loaded when the Server launches[^2]. You can list all available modules using the "Target→List Modules" menu of the Server. An example is shown in Figure 13. Audio Weaver has hundreds of modules available, sufficient for most audio use cases.

Figure 13. The Server can show what modules are available for you to use. The modules are grouped into DLLs and this is only a partial list of the first two libraries.

In total, Audio Weaver contains over 500 different processing modules organized into separate module libraries.

Standard -- Time domain playback processing

Advanced -- Multirate and frequency domain processing. Also multichannel versions of some modules.

Voice -- Echo cancelers, beamformers, and noise reduction for voice applications

Sound Synthesis -- PCM generators for chimes, AVAS, and sound design applications

Machine Learning -- ML run-time interpreters (TFLM and ONNX). Feature extraction.

These modules are sufficient for most playback and voice processing use cases and many customers are able to develop their products using the supplied modules. More details are found on the Documentation Hub in the Section entitled Module Users Guide.

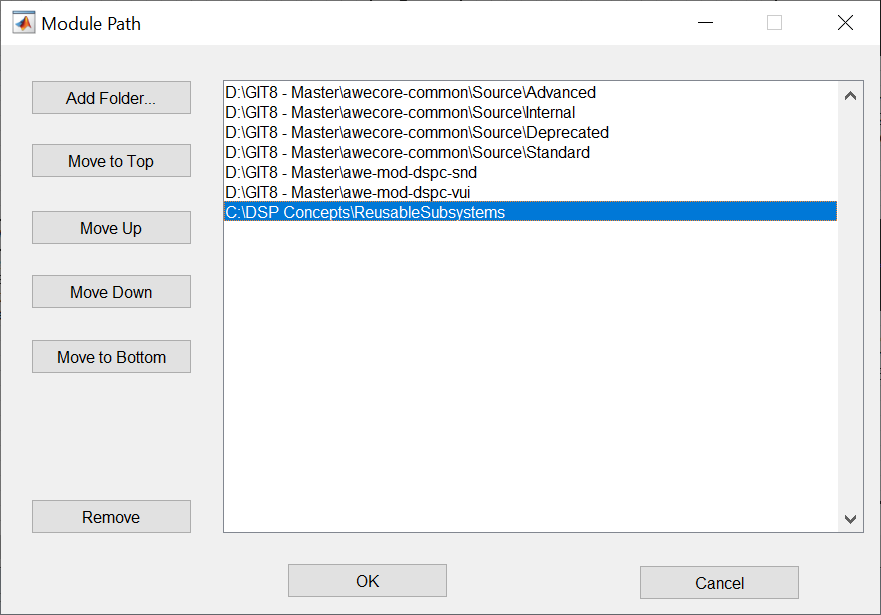

You can add or remove module libraries in Designer via the "File→Set Module Path" menu. This opens the dialog shown below. If you are developing custom modules, you must add your custom libraries here. When you close this window, Designer rescans all module libraries and updates the Module Browser.

Figure 14. Window to configure the module search path.

Custom Module API

You can also add custom modules through our Custom Module API. This is for advanced users and IP partners who want to leverage existing audio processing functions within the Audio Weaver execution environment. Modules are developed using a combination of MATLAB code and C code. The Audio Weaver Designer includes a Custom Module Wizard which simplifies the overall development process.

Only the module writer needs access to a MATLAB license. Once a module is complete, it can be delivered as an Interpreted Module that is compatible with the Standard Edition of Audio Weaver. The users of the custom module do not require MATLAB licenses.

If you develop custom modules, we recommend that you start on Windows and debug your module in Native mode. This is the friendliest development environment and easiest to debug. Once your module is working in Native mode, and you have a regression test, then migrate to an embedded target. Recompile your code and verify that it is working. Then profile to check the resource consumption and see if it requires optimization. We recommend keeping the Native and target implementations in sync (same sound quality) so that Native mode can continue to be used for prototyping, tuning, and testing.

Open IP

DSP Concepts provides access to signal flows implementing a variety of useful audio features. These signal flows are provided as open Audio Weaver designs allowing you to understand, modify, and tune the IP yourself. We encourage customers to build upon our designs and make improvements for their unique requirements. The designs are found on the Documentation Hub and include:

Presence Detection -- ultrasonic motion-activated on/off control

Direction of Arrival -- Accurate estimation of audio source direction using 2+ microphones.

Bone Conduction Fusion -- For earbuds. Combines a standard microphone with an accelerometer to clean up voice.

PlayWide -- Stereo image enhancement. Widens the perceived sound field of stereo playback systems.

PlayBass -- Bass enhancement. Extends perceived bass response by adding harmonics based on low frequency fundamentals.

PlayLevel -- Volume management. Maintains output at a target loudness level despite varying input levels.

PlayVoice -- Dialog boost. Emphasizes the voice band range while attenuating non-voice band signals.

Room Correction -- Automatically applies low frequency EQ to adjust for room acoustics. Compensates for device placement.

Wind Noise Reduction -- Suppresses characteristic noise in specific frequency bands. Requires 2 or more microphones.

Dynamic Instantiation

When a system is built in Audio Weaver, the Designer tool sends a list of commands to the target to instantiate, configure, and connect modules. This set of commands is similar to a netlist and allows you to reconfigure the processing without having to rebuild code. This leads to an agile workflow and avoids having to generate code and rebuild the target image. We refer to this as Dynamic Instantiation.

MATLAB Automation API

Audio Weaver is tightly integrated with MATLAB and you can use MATLAB scripts for general automation, regression testing, and creating custom inspector control panels. Refer to the MATLAB Automation API in Section 6.

Flash File System

Some deeply embedded processors, like microcontrollers or DSPs, do not have an operating system with a file system. As an alternative, Audio Weaver includes an optional Flash File System which provides basic functionality. The Flash File System is used to store AWB files which are loaded at initialization, or WAV file samples for sound generation modules. Modules leveraging this feature include "FFS" in their module class name.

Configuring Audio Weaver

The underlying functionality of Audio Weaver can be configured in several different ways.

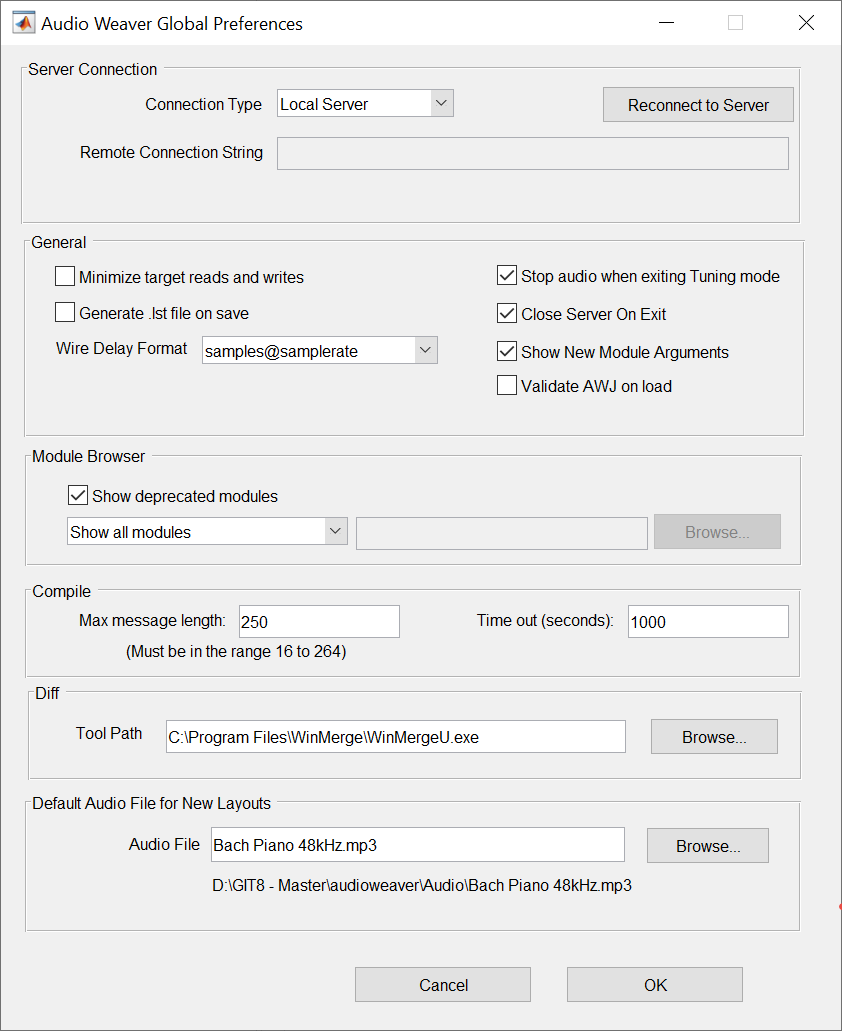

Global Preferences

Global Designer settings are accessed via the "File→Global Preferences" menu. Some things you can change here are:

- Configure a remote tuning proxy
- Change general Designer attributes like what format to use when displaying delay information
- Configure the module browser
- Set a diff tool
- Change the default audio file used in Native mode

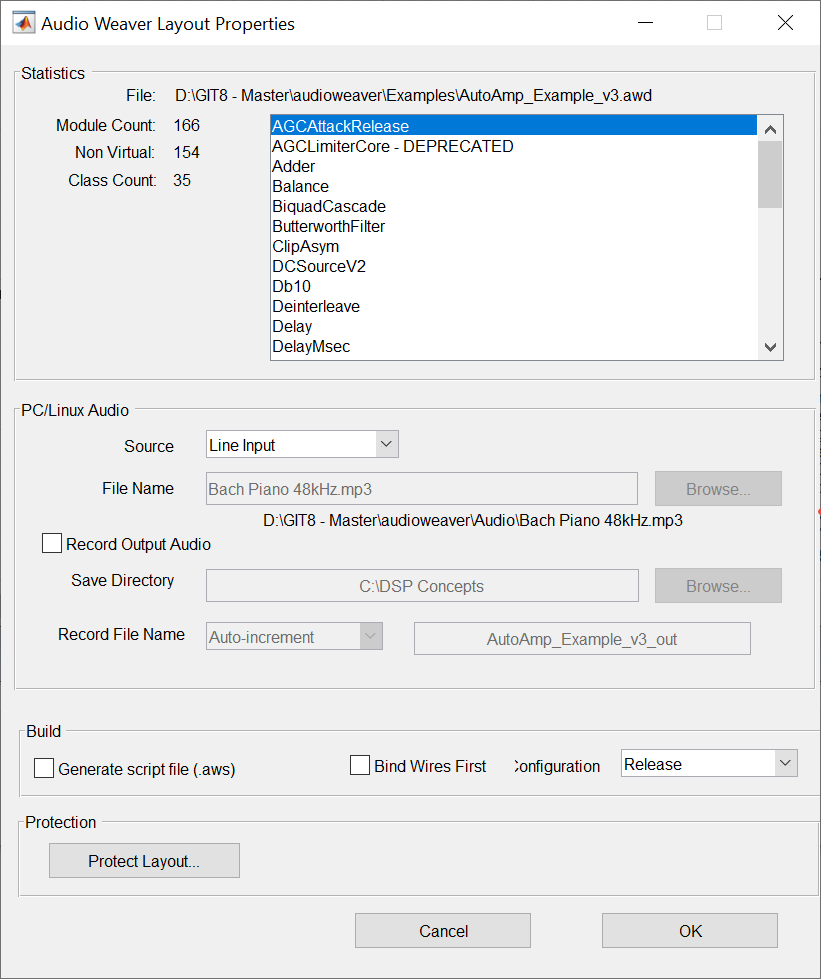

Layout Settings

You can configure settings which apply only to a particular signal flow on the "Layout→Layout Properties" menu item. Here you can:

- See which module classes are used in your design, and the total module count.
- Switch between sound card and file input.
- Enable recording of the hardware output pin in Native mode.
- Switch between Release and Debug builds.

Figure 15. Designer's Global Preferences and Layout Properties dialog boxes.

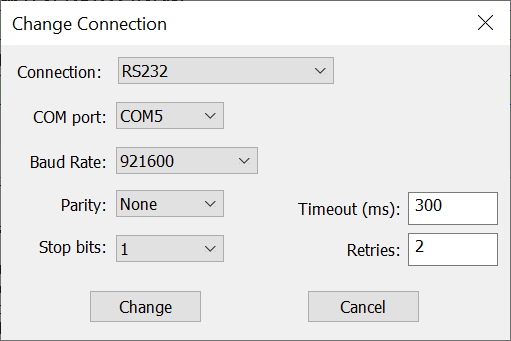

Server Settings

The Server has two configuration windows which are frequently used.

Changing the Connected Target

The "Target→Change Connection" menu allows you to change the tuning interface and configure settings.

Figure 16. Changing the tuning interface through the Server. Here are the settings for an RS232 connection.

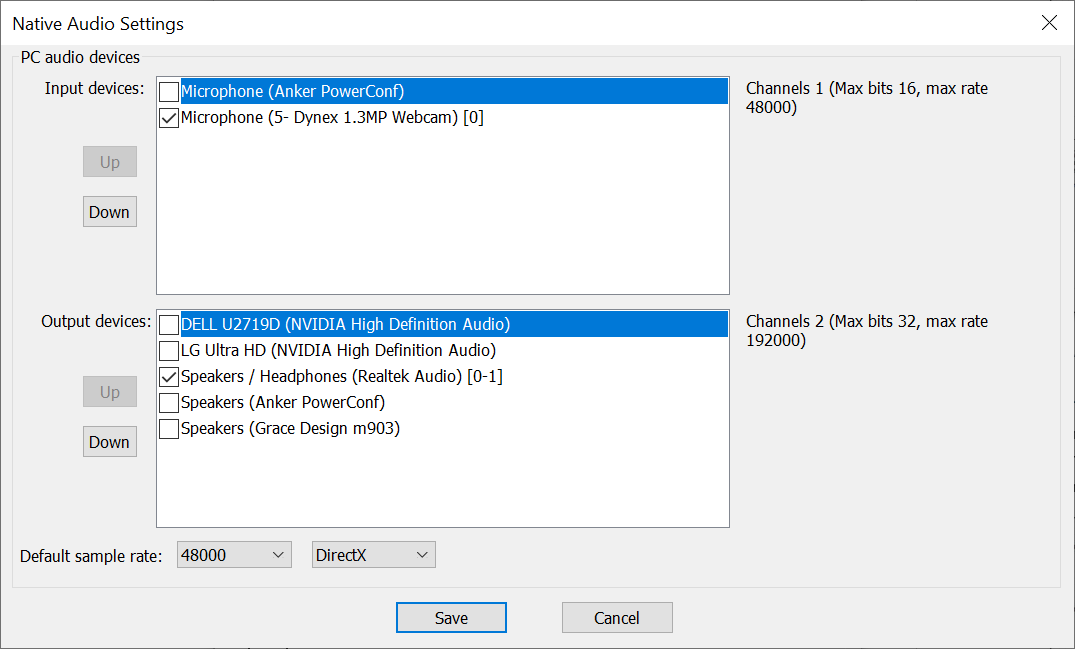

PC Sound Card Settings

You change PC sound card settings in the "File→Native Audio Settings" dialog. You can select multiple devices and switch between DirectX and ASIO drivers. The dialog will show audio devices currently available in your system.

Figure 17. Configuring the audio devices used in Native mode through the Server.

Server INI File

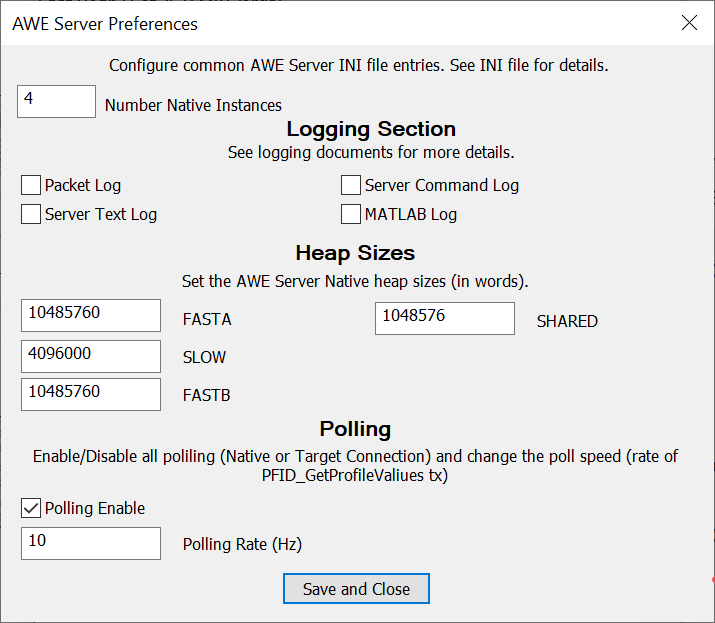

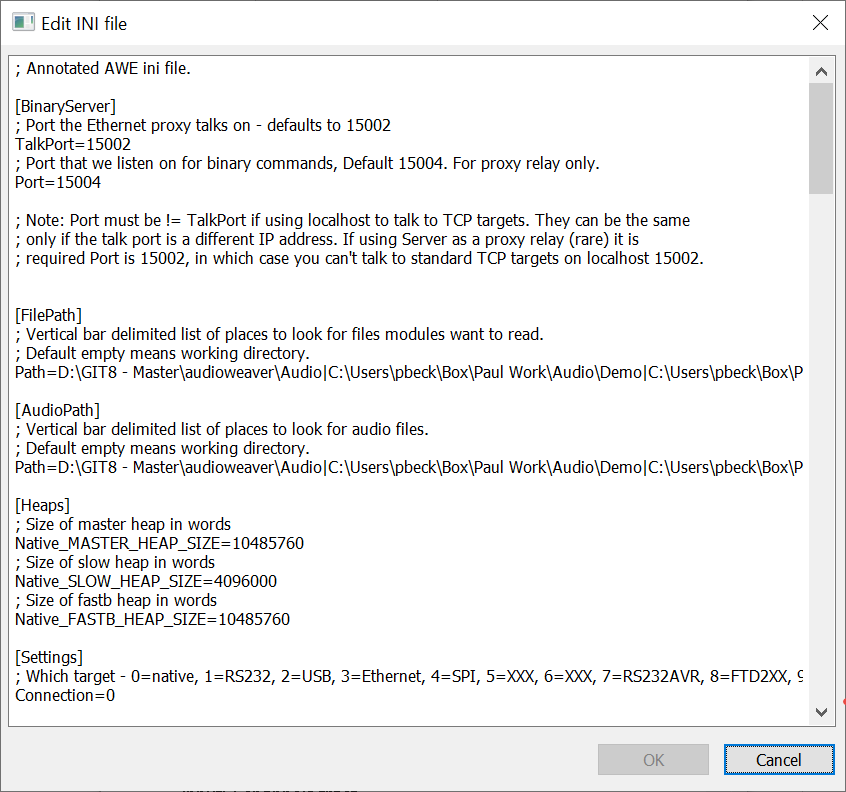

The Server stores a larger number of configuration settings in an INI file. The most frequently used INI settings apply to the Native PC target. For easy access, you can get to these directly using the "File→Preferences" window. To access all INI settings, use the "Help→Open INI File" menu item. This is a text format and uses a standard Key=Value approach. Search the Documentation Hub for "INI File" to learn more.

Figure 18. The Server's Preferences window allows you to configure a subset of the INI file settings.

Figure 19. Partial listing of the Server's INI file settings.

File Search Path

Audio Weaver sometimes needs to access files stored locally on your PC. Instead of using absolute paths (which make it difficult to share designs with others), you can use the File Search Path feature. You configure this via the "File→Set File Search Path" dialog. In your design, instead of absolute paths, you store only the base file name. Designer then locates the actual file on disk by searching, in order, through the File Search Path. This feature is mainly used in two places:

- When specifying an audio file to use in Native mode (Layout Properties dialog).
- When modules need to access files on disk. Examples are wake word model files or machine learning model files.
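The in-order lookup behind the File Search Path can be illustrated with a short Python sketch. It only demonstrates the "first directory that contains the base file name wins" behavior described above. The function name and the file names are hypothetical, and this is not Designer's actual implementation.

```python
import tempfile
from pathlib import Path


def resolve_on_search_path(base_name, search_path):
    """Return the first existing file named base_name, searching in order."""
    for directory in search_path:
        candidate = Path(directory) / base_name
        if candidate.is_file():
            return candidate
    return None  # not found in any directory on the search path


# Demonstration with two temporary directories acting as the search path.
with tempfile.TemporaryDirectory() as first, tempfile.TemporaryDirectory() as second:
    (Path(first) / "speech.wav").write_bytes(b"")
    (Path(second) / "speech.wav").write_bytes(b"")
    (Path(second) / "model.bin").write_bytes(b"")

    search_path = [first, second]
    # Present in both directories: the earlier entry takes precedence.
    hit_first = resolve_on_search_path("speech.wav", search_path).parent == Path(first)
    # Present only in the second directory.
    hit_second = resolve_on_search_path("model.bin", search_path).parent == Path(second)
    # Present nowhere on the search path.
    missing = resolve_on_search_path("absent.wav", search_path)
```

Because a design stores only the base file name, two engineers can keep the same file in different folders and still open the same design, provided each one lists the right folder in their own search path.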

Logging Support

You can log data in several different ways in Audio Weaver. The most basic is to record the hardware output pin when in Native PC mode. You configure this on the Layout Properties dialog discussed in Section 2.8.2.

You can also inject and record audio signals inside your signal flow using the WavFileSource and WaveFileSink modules. This feature is available in Native mode and on targets that have a file system (like Linux or Android).

The Audio Weaver Server also has built-in logging of messages. This is useful when debugging tuning interfaces or remote tuning proxies. The Server logging information is shown at the top of Figure 18.

Real-Time Audio

Audio Weaver performs block-based audio processing. For each module in the design, the framework calls each module's processing function. For most systems, the larger the block size, the more efficient the processing becomes[^3]. The block size of the system is determined based on what algorithms are running and how much memory is available. A low latency application, like automotive road noise cancellation, might run at a block size of ½ msec. Telephony applications are still latency sensitive, and might use block sizes in the range of 4 msec to 8 msec. Playback applications are less latency sensitive and could use even larger block sizes.

Systems that operate using one block size are single threaded. This is the most basic real-time system. If a system requires multiple block sizes, then multithreading is required. We describe each type in turn.

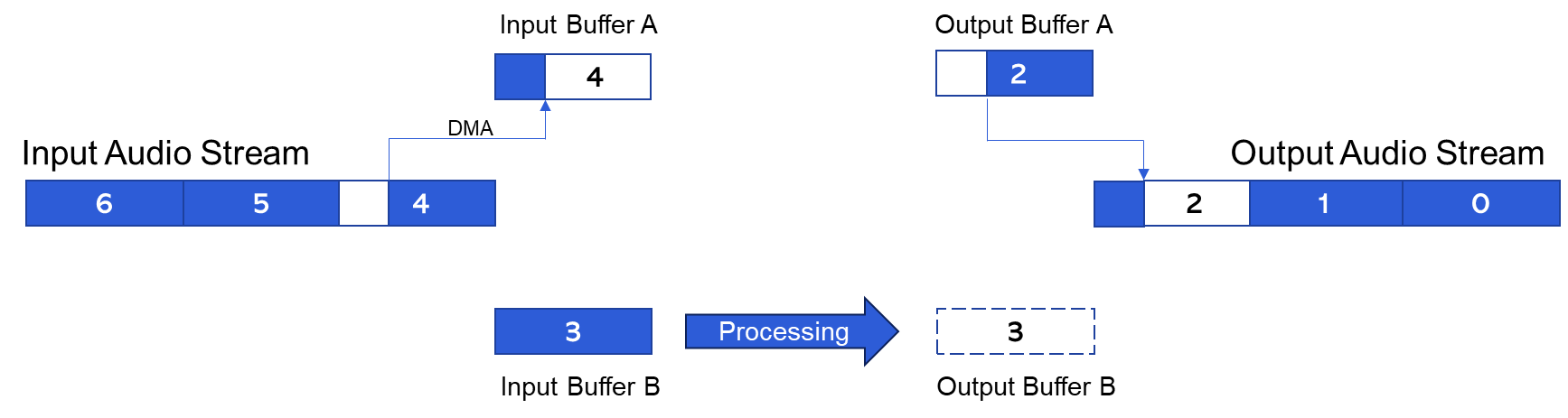

Single Threaded Processing

Consider the system shown in Figure 20 which operates at a 1 msec block size. This corresponds to 48 samples at 48 kHz. The underlying BSP code will be designed to buffer up 1 msec blocks of audio data, generate an interrupt, and then call the Audio Weaver processing. Real-time audio I/O is usually performed with DMA and double buffering as shown in the figure. The DMA fills (or empties) buffer A while buffer B is used for processing. Every 1 msec the buffers alternate.

Figure 20. Single threaded system operating with ping/pong buffers A and B. The numbers correspond to the index of the 1 msec blocks of audio data in the system.

In the system above, you can see that block 4 is filling the input DMA buffer while block 2 is streaming out. The net latency through the system is exactly 2 blocks and this is the lowest latency that can be achieved for a single threaded system. The latency depends on the block size with smaller blocks yielding lower latency.
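The two-block latency follows directly from the three pipeline stages (receive, process, transmit), each taking one block interval. The Python sketch below models only that bookkeeping. It is a simplified illustration with hypothetical function names, not BSP code.

```python
def pipeline_schedule(num_intervals):
    """For each block interval k, list the block index handled by each stage."""
    schedule = []
    for k in range(num_intervals):
        schedule.append({
            "input_dma": k,                            # block being received
            "processing": k - 1 if k >= 1 else None,   # block received last interval
            "output_dma": k - 2 if k >= 2 else None,   # block processed last interval
        })
    return schedule


def io_latency_samples(block_size):
    """Input-to-output latency of the double-buffered scheme, in samples."""
    return 2 * block_size


schedule = pipeline_schedule(6)
# While block 4 is arriving, block 3 is processed and block 2 streams out.
interval_4 = schedule[4]
latency_1ms_blocks = io_latency_samples(48)   # 48 samples per block at 48 kHz
```

With 48-sample blocks this gives 96 samples, or 2 msec at 48 kHz.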

Basic Block Size

In Audio Weaver, the block size of the DMA operation is called the "basic block size" of the BSP. When the Server attaches to a target, it queries the target and displays information on the Output window. An example for a SHARC 21594 EZ-KIT target is shown in Figure 7. The basic block size is 16 samples and the sample rate is 48 kHz. The audio processing thus happens every 1/3 of a millisecond.

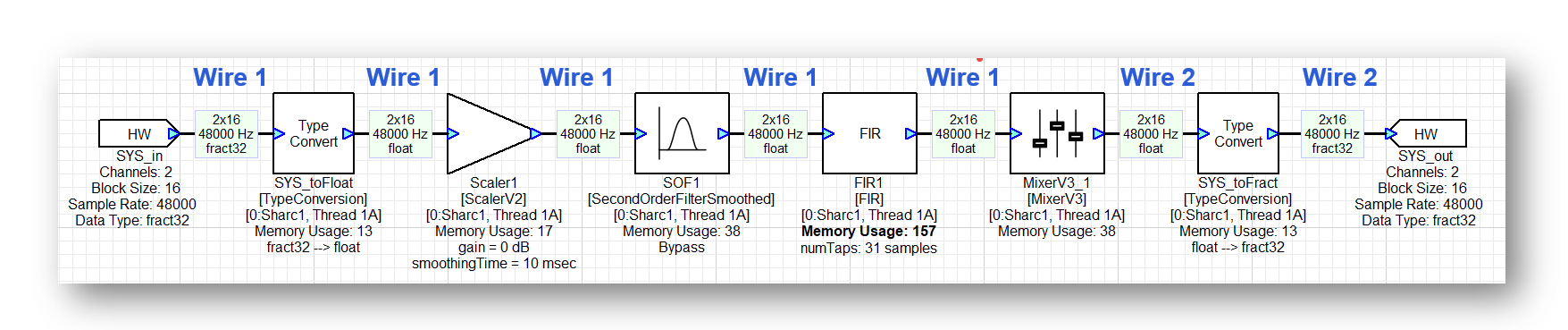

Wire Buffers

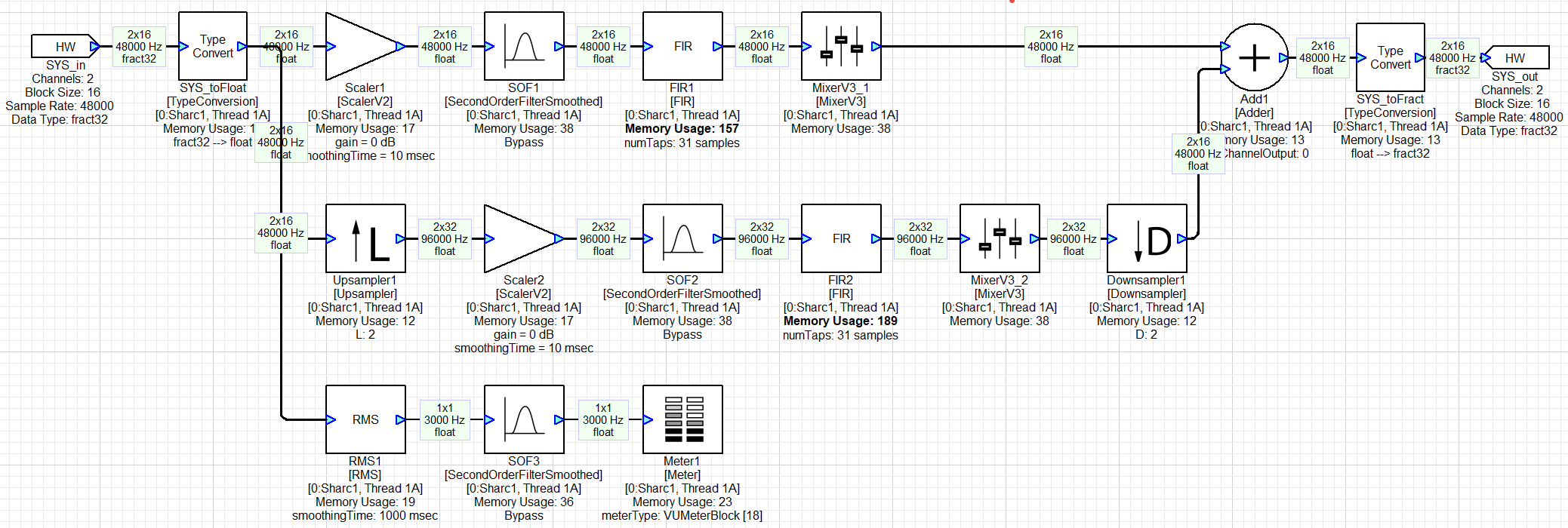

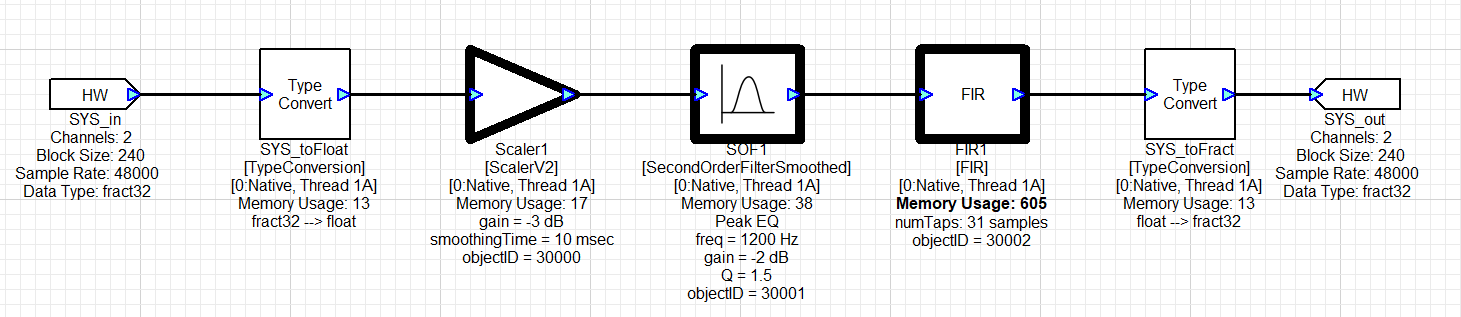

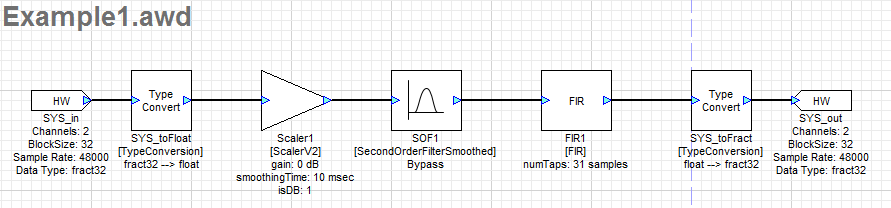

When Audio Weaver builds a system, it determines the execution order of the modules. This routing operation begins at the input pin of the system and proceeds module by module until the output pin is reached. If multiple modules can execute, then heuristics are used to determine which module is next. Consider the simple system shown in Figure 21 which contains hardware input and output pins, and 6 modules.

- Audio arrives from the BSP on the SYS_in hardware input pin. The data is 32-bit fixed point (fract32), stereo, with a block size of 16 samples.
- SYS_toFloat converts to a floating-point representation.
- Scaler1 applies a gain in dB.
- SOF1 is a Biquad filter with design equations.
- FIR1 is a 31-point FIR filter.
- MixerV3_1 is a 2-in / 2-out mixer with a configurable 2x2 mixing matrix.
- SYS_toFract converts back to fixed-point.
- SYS_out sends the data to the BSP.

All processing is with stereo data using a block size of 16 samples.

Figure 21. Simple Audio Weaver system containing 6 modules. On each wire buffer is shown (number of channels) x (block size), sample rate, and data type.

The modules in this simple system execute from left to right as shown in the figure. In Audio Weaver, wire buffers are used to hold intermediate results between modules, and these are marked in Figure 21. Whenever possible, Audio Weaver tries to reuse wire buffers to reduce the total amount of memory required by the system. If a module uses the same wire for input and output, then the processing is in-place. In this example, Wire 1 is reused multiple times until we reach the Mixer module. The Mixer module is unable to do in-place processing[^4] and therefore Audio Weaver allocates Wire 2. Wire 2 continues to be used until the output of the system.
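The wire assignment for this example can be reproduced with a deliberately simplified Python sketch. It handles only a linear chain of single-input, single-output modules and assumes that every module except the Mixer can run in-place, as the text above implies. The function name is hypothetical, and Audio Weaver's actual routing algorithm handles general graphs and uses heuristics not shown here.

```python
def assign_wires(chain):
    """Assign wires along a linear chain.

    chain: list of (module_name, can_process_in_place) tuples in execution order.
    Returns a list of (module_name, input_wire, output_wire) tuples.
    """
    assignments = []
    current = 1  # Wire 1 carries the data from the hardware input pin
    for name, in_place in chain:
        if in_place:
            out = current                   # reuse the input wire for the output
        else:
            out = 2 if current == 1 else 1  # in a linear chain the other wire is free
        assignments.append((name, current, out))
        current = out
    return assignments


# In-place flags are assumptions read from the description of Figure 21.
chain = [
    ("SYS_toFloat", True),
    ("Scaler1", True),
    ("SOF1", True),
    ("FIR1", True),
    ("MixerV3_1", False),   # cannot process in-place
    ("SYS_toFract", True),
]
assignments = assign_wires(chain)
wires_used = {w for _, i, o in assignments for w in (i, o)}
```

Six modules end up sharing two wire buffers, which is the memory saving that wire reuse is meant to deliver.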

Layouts

An ordered list of modules in Audio Weaver is called a layout. The simple system above contains 6 modules in the layout (the HW input and output pins do not count) and the modules are ordered based on their execution order. On the target processor, a layout is represented as an array of N pointers to module instance structures. When processing audio, Audio Weaver iterates through the modules in the layout and calls the associated processing function for each module. The input and output wires used by each module are preassigned at build time. Execution of a layout is very efficient, and it essentially involves calling the processing function of an ordered list of modules.
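Conceptually, executing a layout is a single loop over an ordered list. The Python sketch below mirrors that idea with two toy modules. The classes, the `process` method, and the dictionary of wires are hypothetical stand-ins for the C module instance structures and are not the AWECore API.

```python
class Gain:
    """Toy module: multiplies every sample by a linear gain."""

    def __init__(self, gain, in_wire, out_wire):
        self.gain = gain
        self.in_wire = in_wire      # wire indices are fixed at build time
        self.out_wire = out_wire

    def process(self, wires):
        wires[self.out_wire] = [self.gain * x for x in wires[self.in_wire]]


class Invert:
    """Toy module: flips the sign of every sample."""

    def __init__(self, in_wire, out_wire):
        self.in_wire = in_wire
        self.out_wire = out_wire

    def process(self, wires):
        wires[self.out_wire] = [-x for x in wires[self.in_wire]]


def run_layout(layout, wires):
    """Process one block: call each module's processing function in order."""
    for module in layout:
        module.process(wires)


wires = {1: [1.0, 2.0, -4.0], 2: [0.0, 0.0, 0.0]}
layout = [Gain(0.5, in_wire=1, out_wire=1),   # in-place on wire 1
          Invert(in_wire=1, out_wire=2)]      # reads wire 1, writes wire 2
run_layout(layout, wires)
```

All scheduling decisions (order and wire assignment) are made before the loop runs, so the per-block cost of the framework itself is just the function calls.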

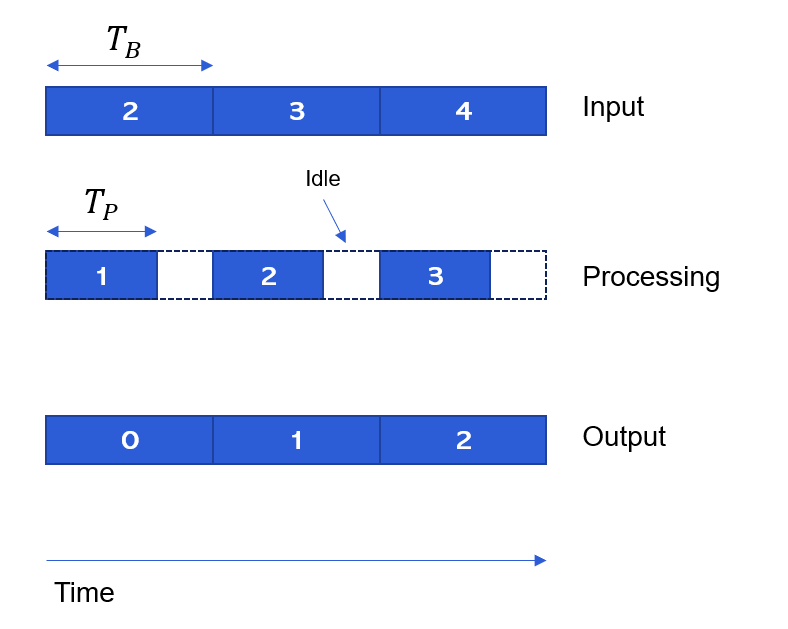

The processing of the 6 modules in the layout must be completed within 1/3 of a msec in order for the system to run in real-time. Figure 22 shows activity in different parts of the system. The top-section is the block-based input DMA, the middle-section is the processing, and the bottom-section is the output DMA. The numbers indicate the index of each block. While block 2 is being received, block 1 is being processed, and block 0 is being output.

Figure 22. Single threaded processing over time.

Since the modules all execute within the same layout, there is no added latency. The overall latency of this system is solely due to the I/O, and in this case is 2 x 16 = 32 samples at 48 kHz. Additional latency only occurs if it is explicitly caused by a module, for example, a delay module or an FIR filter with initial coefficients set to zero.

Profiling

Figure 22 also indicates two different times:

\(T_{B}\) = Time between DMA interrupts ("block time")

\(T_{P}\) = Time for the processing to complete ("processing time")

The Audio Weaver framework leverages on-chip clocks or counters to measure these times. The Target Information shown in Figure 7 has "Profile clock rate = 250 MHz", and thus this SHARC BSP measures time with a resolution of 4 nanoseconds. With these time measurements, we can easily determine the overall loading of the system as:

CPU Load % = \(100 \left( \frac{T_{P}}{T_{B}} \right)\)

The Audio Weaver framework also uses these timers to measure the execution time of each module in the layout and thereby provide detailed module-level profiling information.
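As a worked example of the load calculation, the Python sketch below uses the 250 MHz profile clock, 16-sample basic block size, and 48 kHz sample rate quoted for the SHARC target. The measured processing time of 25,000 ticks is a made-up value chosen for illustration, and the function names are hypothetical.

```python
PROFILE_CLOCK_HZ = 250_000_000   # "Profile clock rate = 250 MHz"
BLOCK_SIZE = 16                  # basic block size in samples
SAMPLE_RATE_HZ = 48_000


def tick_resolution_ns(clock_hz):
    """Duration of one profile-clock tick in nanoseconds."""
    return 1e9 / clock_hz


def cpu_load_percent(t_p_ticks, t_b_ticks):
    """CPU load as 100 * T_P / T_B, with both times in profile-clock ticks."""
    return 100.0 * t_p_ticks / t_b_ticks


# T_B: ticks between DMA interrupts (1/3 msec at 250 MHz, about 83,333 ticks).
t_b_ticks = PROFILE_CLOCK_HZ * BLOCK_SIZE / SAMPLE_RATE_HZ
# T_P: hypothetical measured processing time for one block.
t_p_ticks = 25_000

resolution = tick_resolution_ns(PROFILE_CLOCK_HZ)
load = cpu_load_percent(t_p_ticks, t_b_ticks)
```

Here the processing uses about 30% of each block interval.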

The profiling scheme described above works when the processor is running at a fixed clock speed. Some processors -- like the Qualcomm Hexagon DSP -- do clock throttling. That is, they lower the clock speed based on the processor loading in an effort to reduce power consumption. The approach described above won't work in this case because the clock speed is lowered and the processor always appears full.

To fix this, Audio Weaver introduced a new profiling scheme in release 8.D.2.5. Instead of computing \(T_{B}\) empirically using a timer, it is instead determined based on first principles:

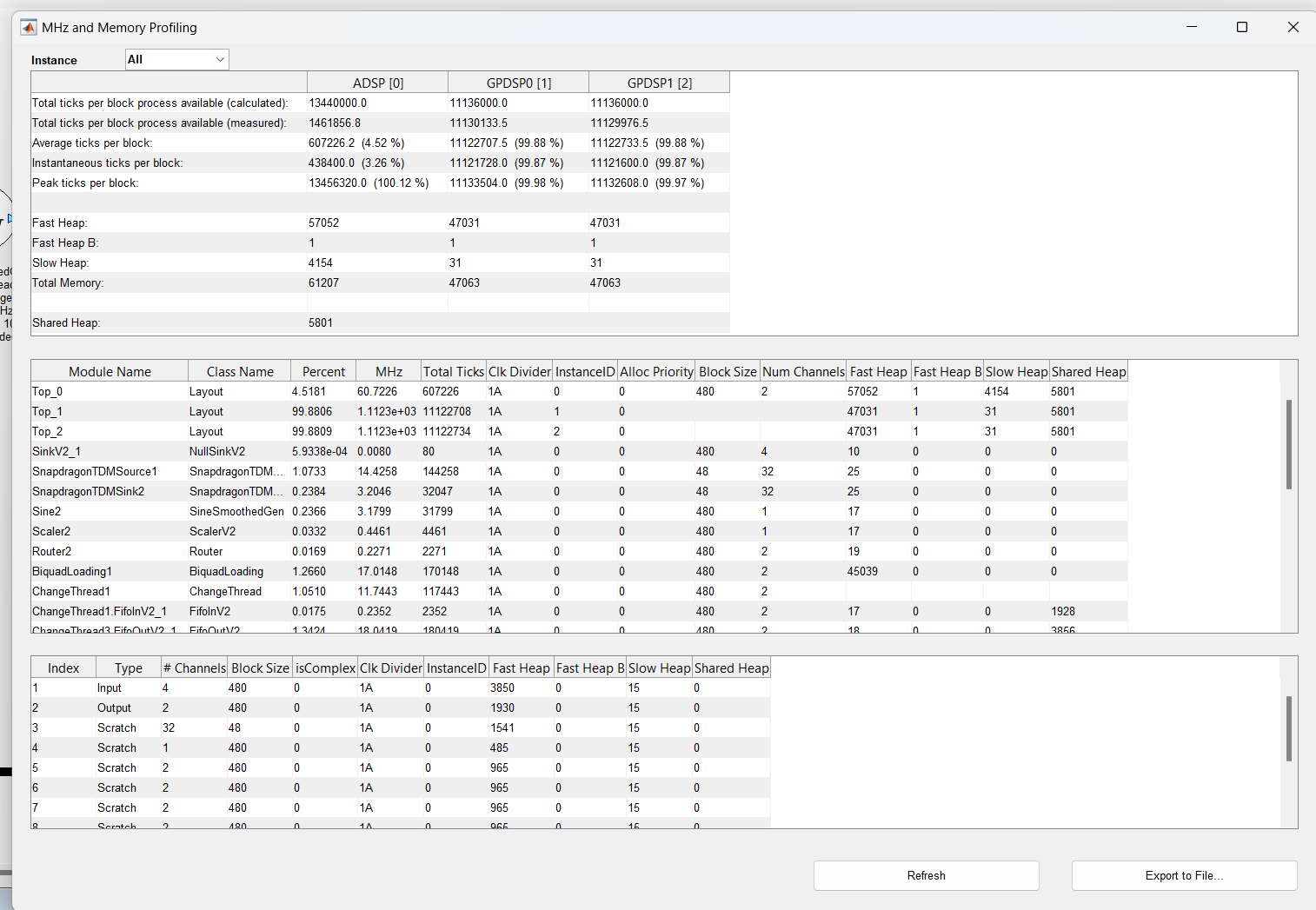

Here are some examples of what this looks like on the Hexagon. The processor speed is 1.344 GHz, the sample rate is 48 kHz, and the block size is 480 samples. The block time is 10 msec and thus there are 13.44M cycles available. When the system is lightly loaded, we measure:

The processing takes 607k cycles (line 3). The processor clock has been reduced to 146.2 MHz and thus there are 1.462M clock cycles available (line 2). Using the traditional elapsed time method of profiling, we would report the CPU load as:

607k/1.462M = 41.5%

which is clearly wrong. The new method reports it as:

607k/13.44M = 4.52%

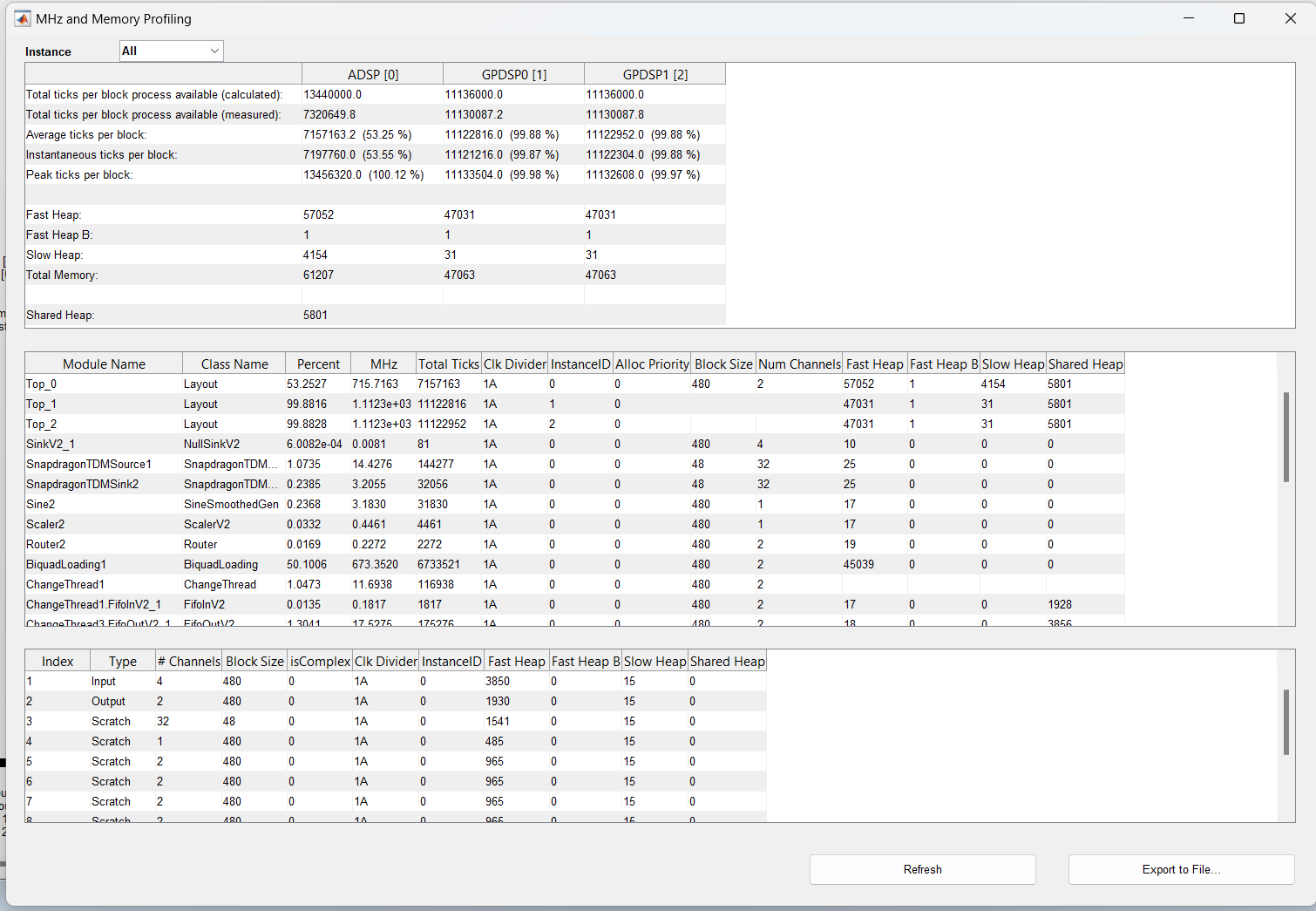

which is correct. When moderately loaded, we measure:

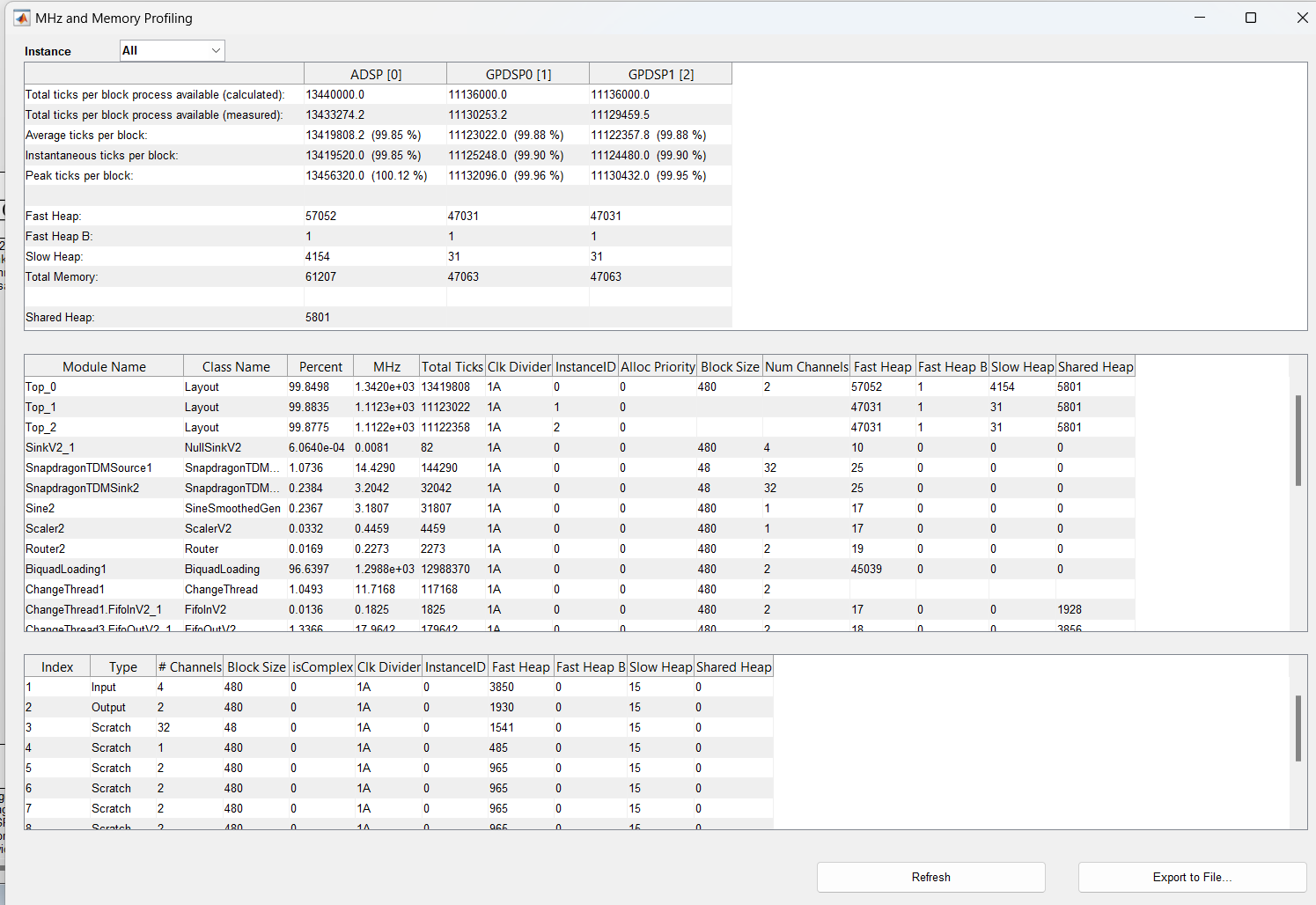

The processing requires 715.7 MHz (line 3) and the processor is running at 732 MHz (line 2). We still correctly report the load as 53.25%. And finally, when the processor is heavily loaded, we now report 99.85%. In all cases, the reported load is correct even when the processor clock speed is varying.
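The lightly loaded case above can be reproduced with a few lines of C. This is a minimal sketch, not the Audio Weaver implementation: the function names are hypothetical, and it assumes that the cycle budget per block is the clock rate multiplied by the block time (block size divided by sample rate), which is consistent with the 13.44M figure quoted above.

```c
/* Load as a percentage of the cycles available in one block. */
static double cpu_load_percent(double processing_cycles, double available_cycles)
{
    return 100.0 * processing_cycles / available_cycles;
}

/* Cycles available in one block at a given clock rate (assumed model:
   clock rate x block time, where block time = block size / sample rate). */
static double cycles_per_block(double clock_hz, double block_size,
                               double sample_rate_hz)
{
    return clock_hz * block_size / sample_rate_hz;
}
```

Calling `cycles_per_block(146.2e6, 480.0, 48000.0)` gives the throttled budget of 1.462M cycles, while `cycles_per_block(1.344e9, 480.0, 48000.0)` gives the fixed budget of 13.44M cycles. Dividing the measured 607k cycles by each budget yields the two load figures discussed above.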

Multirate Processing

Audio Weaver allows modules to change the block size of their output as long as they keep the block time the same as the input. Consider the system shown in Figure 23. The top path is the same as the simple system shown earlier. In the middle section, we upsample from 48 kHz to 96 kHz. The block size also doubles from 16 samples to 32 samples. The block time remains unchanged at 1/3 msec. On the bottom path, the RMS module computes the root-mean-square value of a 16 sample input block and outputs a single value. (Wires with a block size of 1 are called control wires in Audio Weaver.) The output updates every 1/3 msec which corresponds to a 3 kHz sample rate (shown on the wire). This value is filtered by SOF3 and shown on Meter1. As in the other 2 signal paths, the processing still occurs every 1/3 of a msec. Thus, this is still a single threaded system with a single layout. Hold tight; more sophisticated multithreaded processing is described in Section 3.2.
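As a quick check of the numbers in this example, the block time on all three paths works out to the same value (block size divided by sample rate):

```latex
T_{B} = \frac{16}{48\,\text{kHz}} = \frac{32}{96\,\text{kHz}} = \frac{1}{3\,\text{kHz}} = \tfrac{1}{3}\ \text{msec}
```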

Figure . Basic multirate processing in a single threaded system.

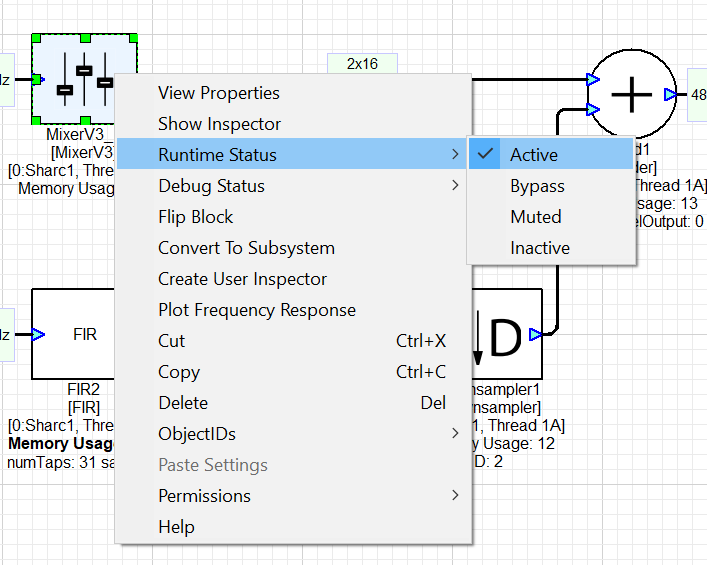

Module Status

For simplified debugging and run-time configuration, modules in Audio Weaver have a tunable module status. The module status can be changed during design time or at run-time by right-clicking on a module as shown below.

There are 4 possible choices for the module status:

-

Active -- the module's processing function is called and is processing audio. This is the default behavior of a module when it is instantiated in Audio Weaver.

-

Bypassed -- the module's processing function is not called. Instead, the input wire data is copied to the output wire. Audio Weaver has a generic bypass function which works in the majority of cases. However, a module can define a custom bypass function, if needed (e.g., when bypassed, the Upsampler in Figure 23 continues to call its processing functions).

-

Muted -- the output wires are filled with all zeros.

-

Inactive -- nothing happens and the output wire buffer is unchanged.

When a module is Bypassed or Muted, the output wire buffers are still written and the module will consume some processing. The lowest processing occurs when the module is Inactive, but you must be careful. When Inactive, the module's output wire buffer is untouched and will contain whatever data was in there earlier. For example, consider the system shown in Figure 21. When Scaler1 is set to Inactive, it will essentially be Bypassed since the module operates in-place and Wire 1 holds Scaler1's input data. However, when SYS_toFloat is bypassed, Wire 1 holds fixed-point data. This will be treated as floating-point by Scaler1 and will result in very loud noise. Be careful when using Inactive mode and consider the possible consequences.
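The four status values can be pictured as a simple dispatch around the module's processing function. The sketch below is illustrative only: the enum, the function pointer type, and `run_module()` are hypothetical names, and it assumes a single input and a single output buffer of the same size, which is the case the generic bypass handles.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical names; the framework's own definitions may differ. */
typedef enum {
    STATUS_ACTIVE,
    STATUS_BYPASSED,
    STATUS_MUTED,
    STATUS_INACTIVE
} ModuleStatus;

typedef void (*ProcessFn)(const float *in, float *out, size_t n);

static void run_module(ModuleStatus status, ProcessFn process,
                       const float *in, float *out, size_t n)
{
    switch (status) {
    case STATUS_ACTIVE:
        process(in, out, n);                  /* normal processing */
        break;
    case STATUS_BYPASSED:
        memmove(out, in, n * sizeof(float));  /* copy input to output */
        break;
    case STATUS_MUTED:
        memset(out, 0, n * sizeof(float));    /* all-zero bits are 0.0f in IEEE-754 */
        break;
    case STATUS_INACTIVE:
        /* Nothing is written: the output keeps whatever it held before. */
        break;
    }
}

/* Example processing function: halve the signal. */
static void half_gain(const float *in, float *out, size_t n)
{
    for (size_t i = 0; i < n; i++)
        out[i] = 0.5f * in[i];
}
```

Note that the Inactive branch is the only one that leaves stale data in the output buffer, which is the hazard described above.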

Multithreaded Processing

Single threaded processing is useful for basic audio designs. More sophisticated algorithms, or running multiple use cases simultaneously, require processing audio in multiple threads.

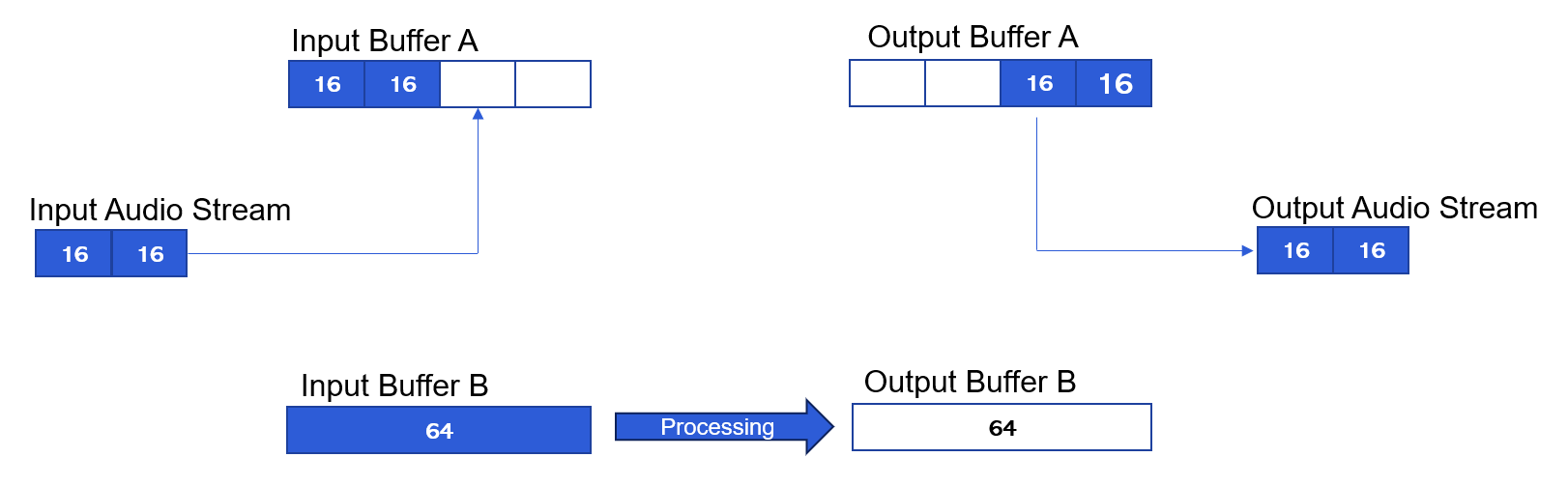

Suppose you are running road noise cancellation with a block size of 0.33 msec (16 samples at 48 kHz). In addition, you want to do playback processing on the same core. You could do the playback processing also at a 0.33 msec block size, but that would be inefficient. It would be better to do it at a larger block size, like 1.33 msec (64 samples). To achieve this requires a multithreaded implementation.

To switch from a block size of 16 samples to 64 samples requires double buffering as shown in Figure 24. There are 2 ping pong buffers with 64 samples each. Input Buffer A is filled 16 samples at a time while Input Buffer B is used for processing. After the input buffer is full (or output buffer is empty), then the buffers switch. Processing then occurs with Buffers A and I/O with Buffers B.

Figure . Double buffering required for multithreading. In the example, audio arrives at a block size of 16 samples and is buffered up to 64 samples.

Layouts and Clock Dividers

In Audio Weaver, the 16 and 64 sample processing occur in separate layouts. Each layout has an ordered list of modules to execute. The smallest block size in the system is triggered off the basic block size. Within this interrupt, the 16 sample processing occurs every call and every 4th call we trigger a lower priority thread for the 64 sample processing. In Audio Weaver, we would state that the 64 sample layout is running at a clock divider of 4.
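The interaction between the ping pong buffers and the clock divider can be sketched as follows. This is a simplified model of the input side only, with hypothetical names (`PingPong`, `pingpong_push()`); the real framework also manages the output buffers and the thread signaling.

```c
#include <stddef.h>
#include <string.h>

#define SMALL_BLOCK   16
#define CLOCK_DIVIDER 4
#define LARGE_BLOCK   (SMALL_BLOCK * CLOCK_DIVIDER)

typedef struct {
    float buf[2][LARGE_BLOCK];  /* ping pong pair */
    int   fill;                 /* index of the buffer currently being filled */
    int   count;                /* small blocks stored in the fill buffer */
} PingPong;

/* Called once per 16-sample interrupt. Returns a pointer to a full
   64-sample buffer on every 4th call, or NULL otherwise. */
static const float *pingpong_push(PingPong *pp, const float *block)
{
    memcpy(&pp->buf[pp->fill][pp->count * SMALL_BLOCK], block,
           SMALL_BLOCK * sizeof(float));
    if (++pp->count < CLOCK_DIVIDER)
        return NULL;

    const float *ready = pp->buf[pp->fill];
    pp->fill ^= 1;   /* swap roles: the other buffer is filled next */
    pp->count = 0;
    return ready;
}
```

A non-NULL return value marks the point at which the lower priority 64-sample thread would be triggered, while the interrupt continues filling the other buffer.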

Processors with Hardware Threads

On a processor like the Hexagon which has hardware threads, these threads execute at the same time. The pattern of processing is as shown in Figure 25. Multithreading on the Hexagon increases the total amount of audio compute. You must use multithreading to fully leverage the Hexagon.

Figure . Multithreaded activity in the Hexagon processor. The 2 block sizes execute in parallel on separate hardware threads.

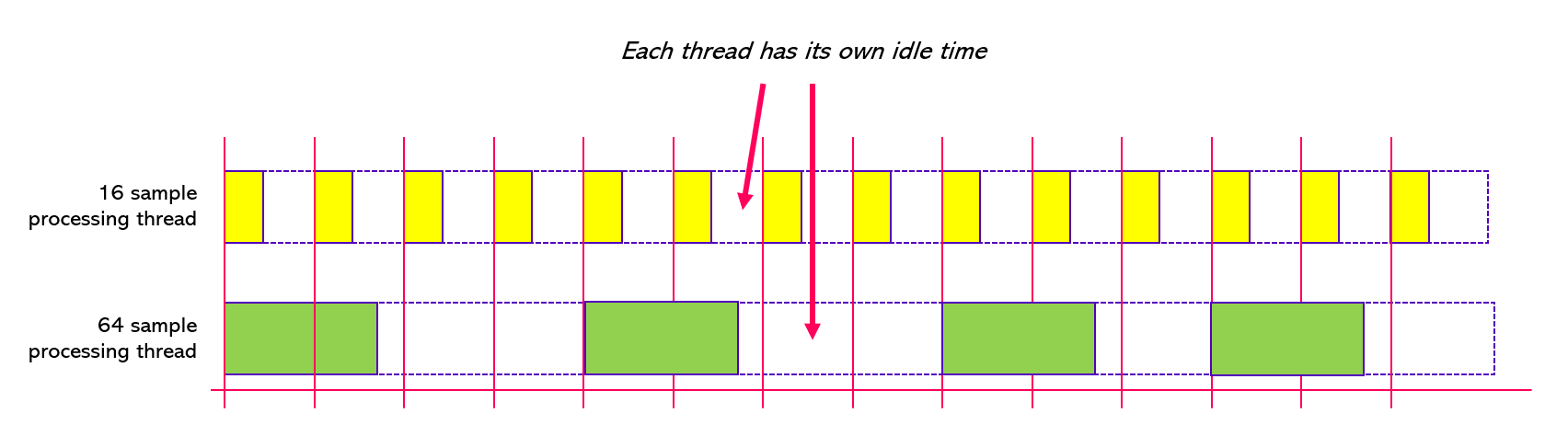

Processors without Hardware Threads

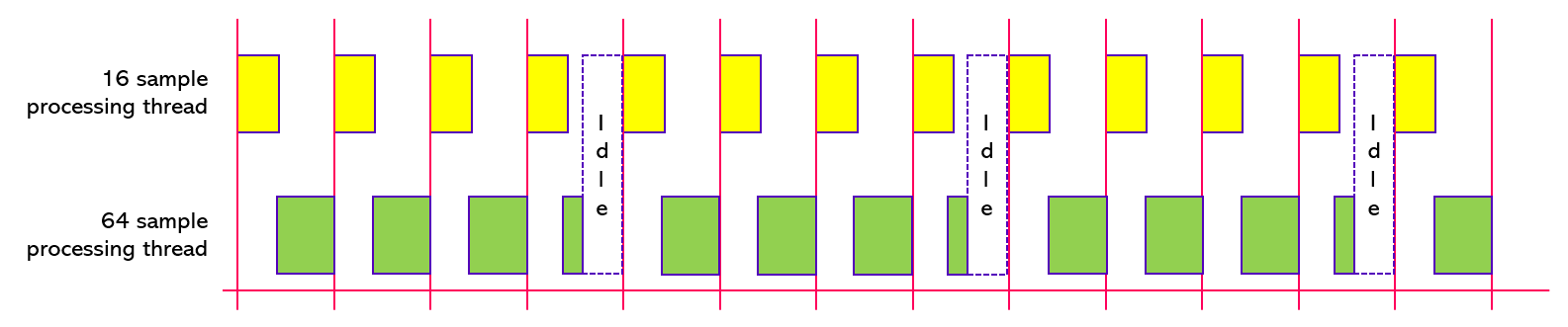

On a processor like the SHARC which doesn't have hardware threads, the processing occurs in separate software threads. The 16 sample processing operates in a higher priority thread and interrupts the 64 sample processing. The processor can only do 1 thing at a time and the pattern of processing is shown in Figure 26. Multithreading on the SHARC does not increase the total amount of audio compute available; it only allows you to process at different block sizes.

Figure . Multithreaded processing on the SHARC. It has only a single hardware thread and the high priority software thread (in yellow) interrupts and preempts the lower priority thread (in green).

The 16 and 64 sample processing are supposed to occur at the same time as shown above, but since the core can only do 1 thing at a time, the higher priority 16 sample processing occurs. Then, when the 16 sample processing is done, the 64 sample processing can begin. The 64 sample processing takes longer to execute and is repeatedly pre-empted by the higher priority thread. The processor is idle only after the 64 sample processing is done.

Measuring CPU load

Measuring the CPU load with parallel running hardware threads is simple. Just measure \(T_{B}\) and \(T_{P}\) for each thread and compute the statistics as before. You end up with a % loading for each hardware thread. With software threads, computing the loading is more difficult because the low priority thread is pre-empted by the high priority thread. Just measuring \(T_{B}\) and \(T_{P}\) for the low priority thread will include the time spent in the high priority thread. To avoid this, Audio Weaver has additional code to ignore the time spent in higher priority threads.

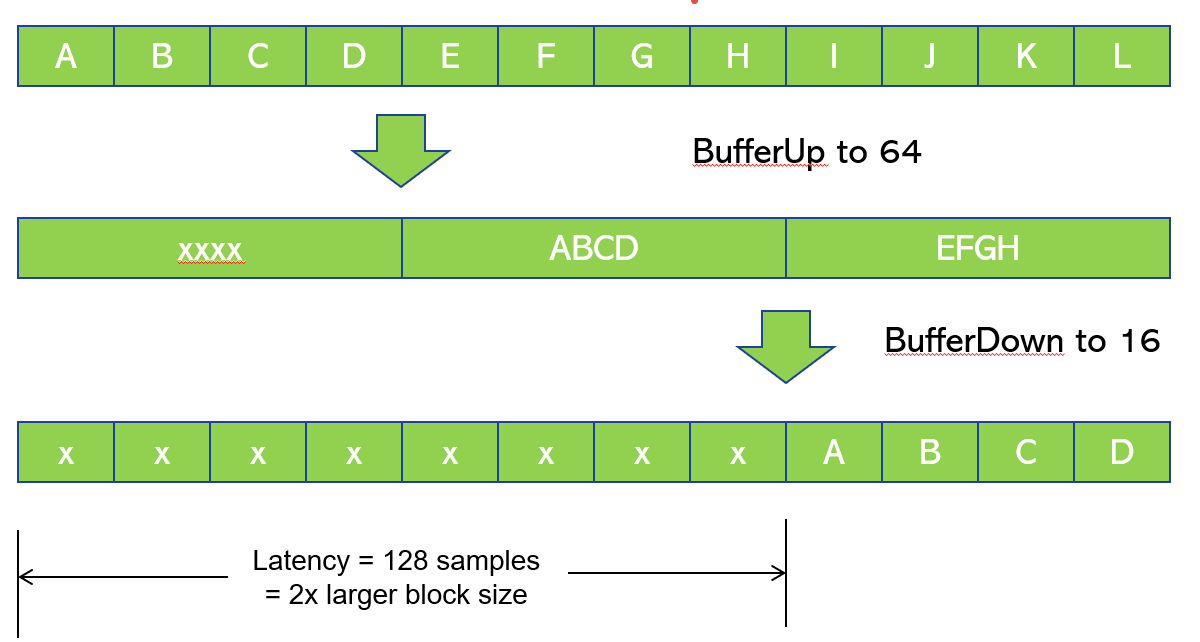

Latency Due to Multithreading Buffering

When implementing multithreading, you also need to consider the latency which occurs from double buffering. A detailed analysis is shown in Figure 27. 16-sample blocks A, B, C, and D arrive. Then the 64 sample block -- ABCD -- can be processed. After this is complete, the results A, B, C, and D can be output. The analysis shows that the delay from buffering up is 64 samples and buffering down adds another 64 samples. The net latency then is 128 samples, or 2.67 msec.
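Written out for the 16-to-64 sample example at 48 kHz, the buffering latency is:

```latex
\text{latency} = \underbrace{64}_{\text{buffer up}} + \underbrace{64}_{\text{buffer down}} = 128\ \text{samples},
\qquad \frac{128}{48\,\text{kHz}} \approx 2.67\ \text{msec}
```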

Figure . Latency that occurs from the buffering used in multithreaded systems.

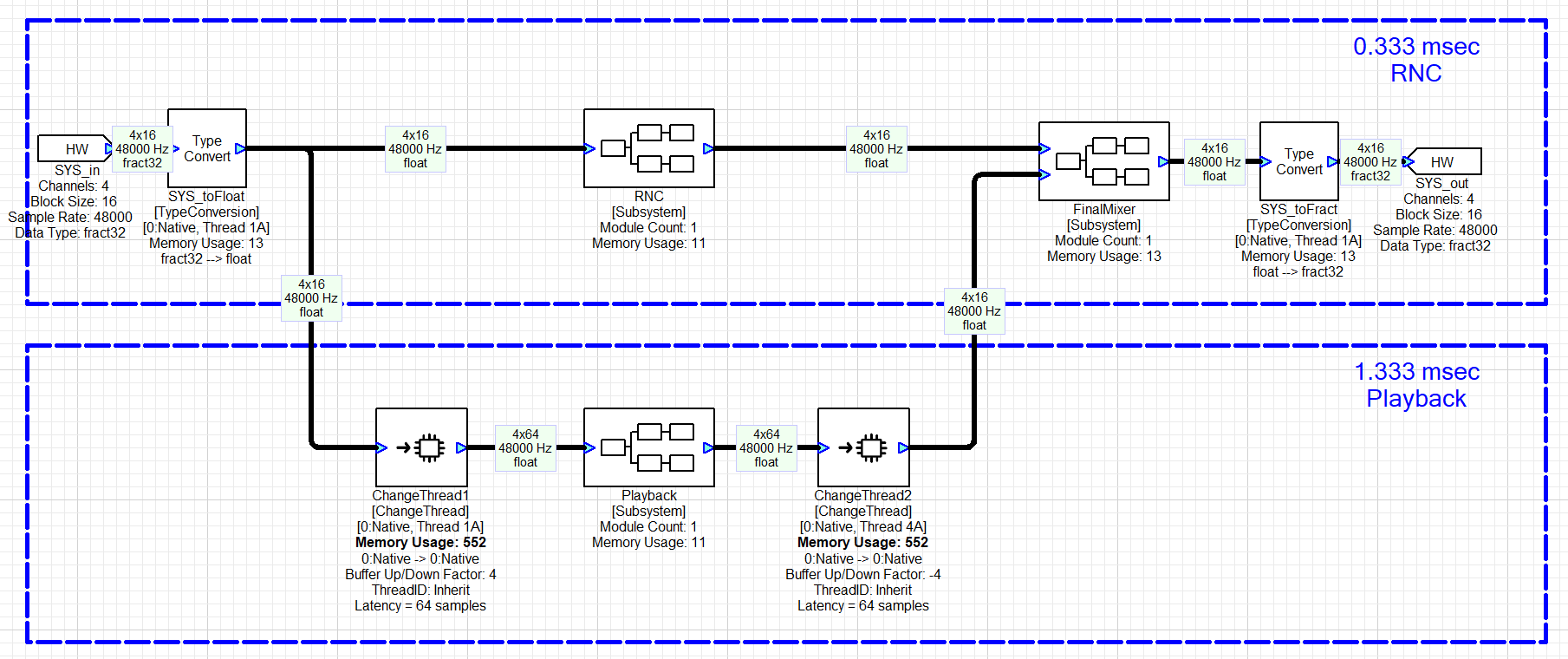

Audio Weaver ChangeThread Module

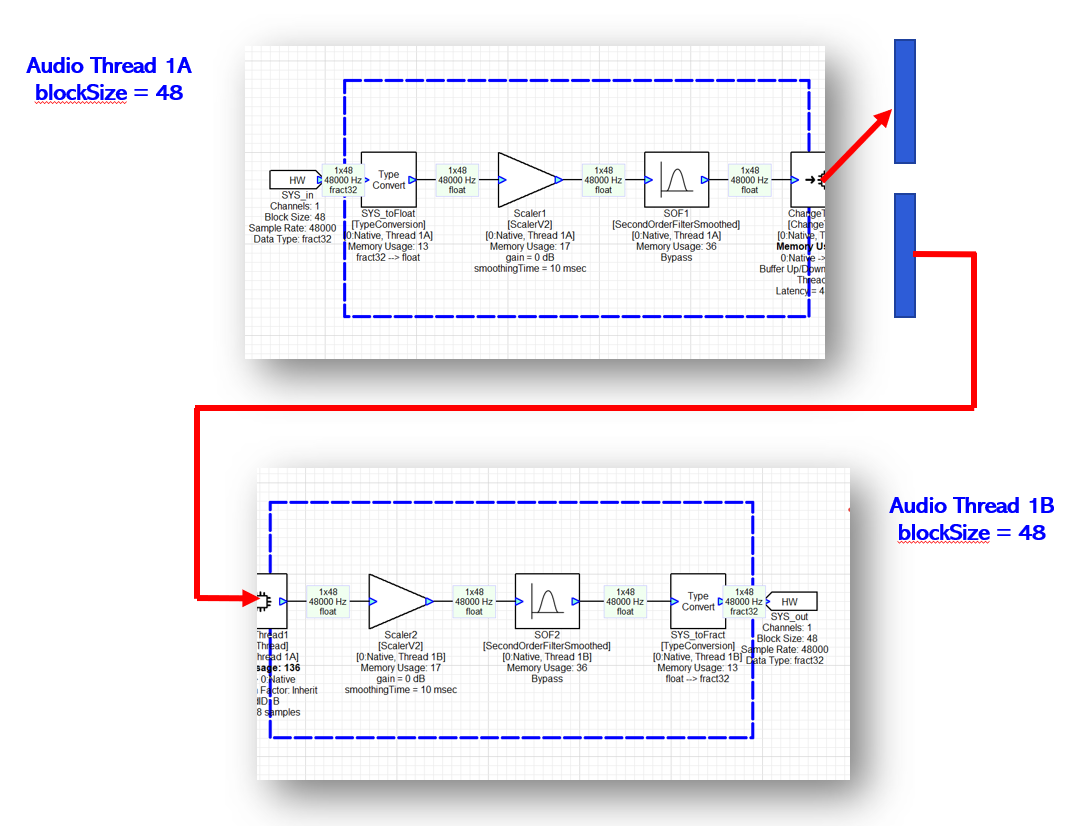

Thread management is performed in Audio Weaver using the ChangeThread module. This module includes an internal double buffer (twice the larger block size) and changes the thread identifier for downstream modules. During design time, you specify the number of blocks which should be accumulated (when buffering up) or subdivided (when buffering down). The thread information (and block size, sample rate, and number of channels) propagates through the signal flow from module-to-module. An example implementation with RNC at 0.33 msec and playback processing at 1.33 msec is shown in Figure 28.

Figure . Basic multithreaded system implemented in Audio Weaver. The high priority thread is in the upper path and has a block size of 0.33 msec. In the lower path, audio is buffered up to 1.33 msec, processed by Playback, and then buffered down to 0.33 msec.

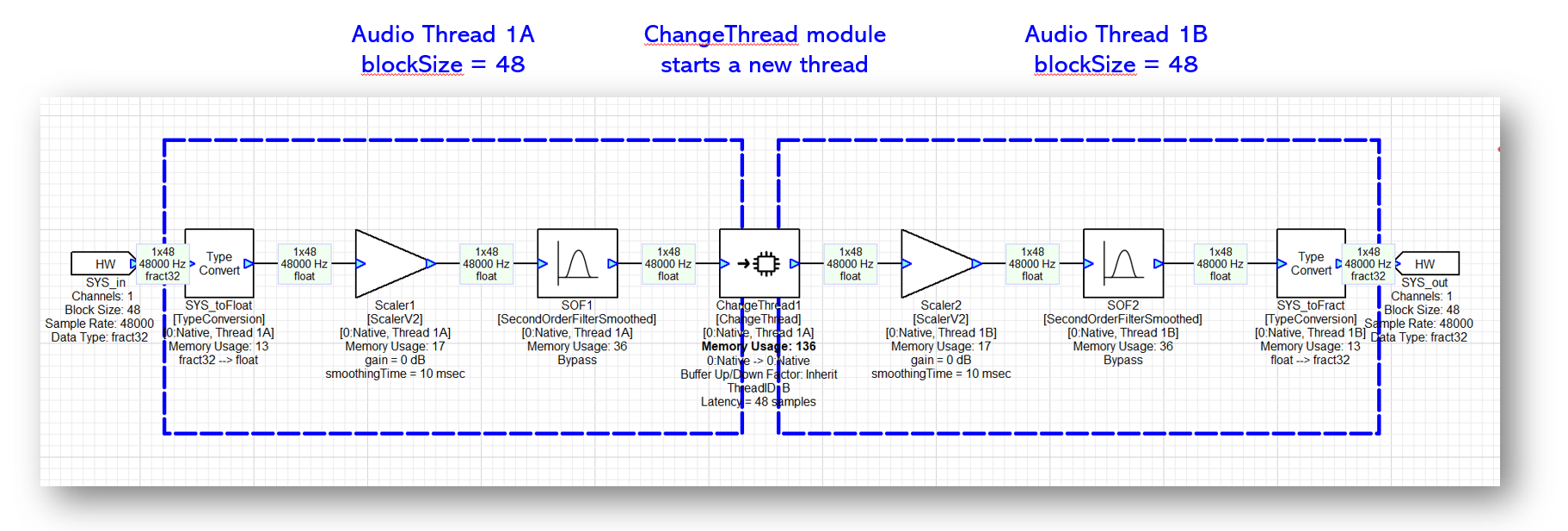

Multithreading at the same block size

The ChangeThread module also allows you to switch to another thread at the same block size. This is useful on the Hexagon (but not on the SHARC) because it increases the total processing available. A basic 2 thread design is shown in Figure 29. The block size is 1 msec throughout the system. The first three modules execute in thread 1A while the last three modules execute in thread 1B.

Figure . Multithreading works even if you don't change the block size.

Keep in mind that the ChangeThread module still includes double buffering in this example, and that the 2 parts of the system execute in parallel as shown in Figure 30. The ChangeThread module also adds 1 block of latency (48 samples) in this example.

Figure . In this multithreaded design, both threads process 1 msec of audio and execute in parallel.

awe_audioGetPumpMask()

This API is called by the main DMA handler (at the smallest block size) and returns a bit mask which indicates which audio thread(s) are ready to run. The signature for the function is

INT32 awe_audioGetPumpMask(AWEInstance* pAWE);

The least significant bit of the return value corresponds to the highest priority audio processing thread in the system.

For example, suppose that the system has a basic block size of 1 msec. Furthermore, assume that the system processes audio with block sizes of 1 msec, 2 msec, and 5 msec. The bit mask returned by the function will have the following values (shown in binary) during each 1 msec interrupt

111 // Call 0

001 // Call 1

011 // Call 2

001 // Call 3

011 // Call 4

101 // Call 5

011 // Call 6

001 // Call 7

011 // Call 8

001 // Call 9

111 // Call 10

The least significant bit is set every time so that the 1 msec audio thread is triggered every time.
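The pattern in the table can be reproduced with a simple counter model. The function below is a hypothetical illustration and not the AWECore implementation: it assumes that a thread becomes ready whenever the interrupt count is a multiple of its clock divider, and it ignores the overrun checks that the real function performs.

```c
#include <stdint.h>

/* Hypothetical model: bit i of the mask is set when the call counter is a
   multiple of dividers[i]. dividers[0] belongs to the highest priority
   (smallest block size) thread, so it maps to the least significant bit. */
static uint32_t model_pump_mask(uint32_t call, const uint32_t *dividers,
                                int count)
{
    uint32_t mask = 0;
    for (int i = 0; i < count; i++) {
        if (call % dividers[i] == 0)
            mask |= (uint32_t)1 << i;
    }
    return mask;
}
```

With dividers of 1, 2, and 5 (block sizes of 1 msec, 2 msec, and 5 msec on a 1 msec basic block size), this model yields the same sequence as the table, repeating every 10 calls.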

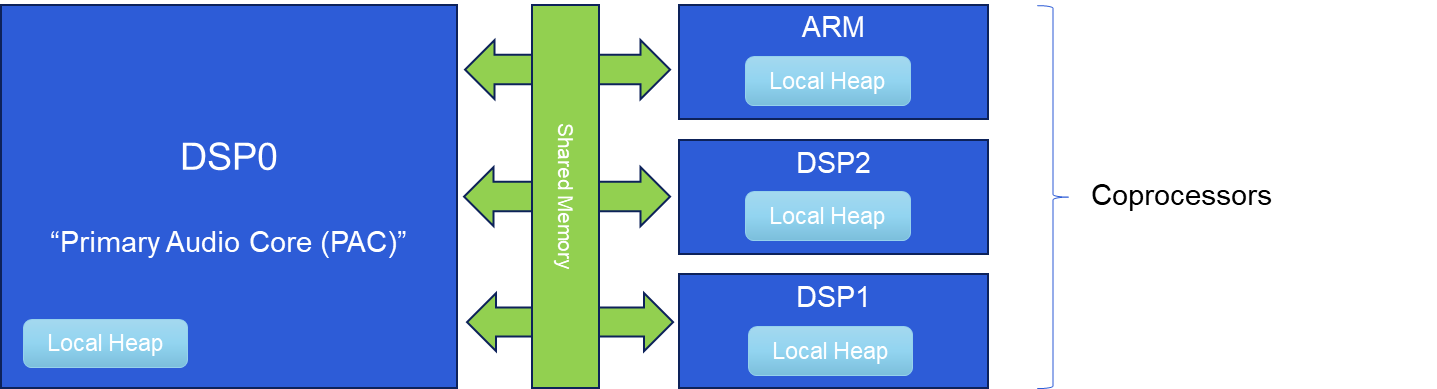

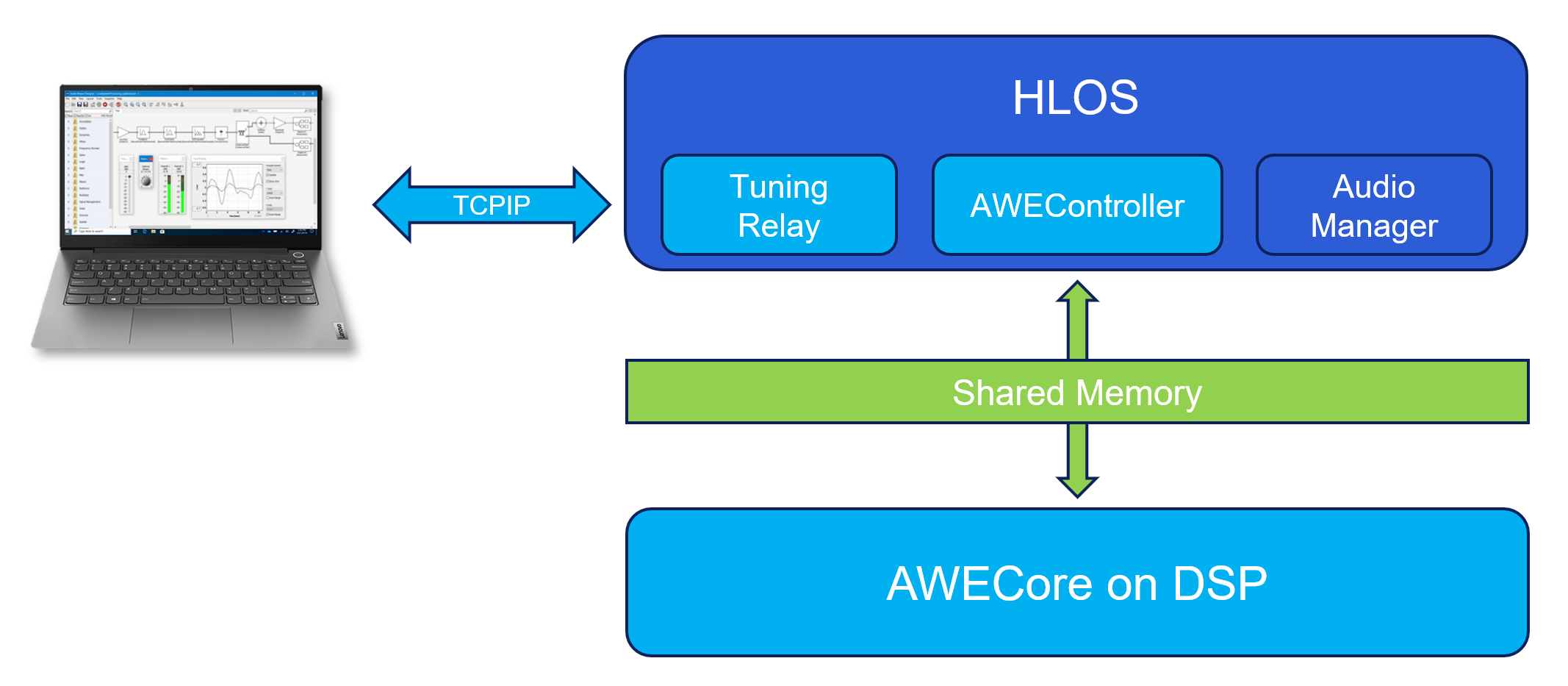

Multicore Processing

Audio Weaver also allows you to partition processing across multiple cores. The approach used is an extension of the multithreaded architecture described in the previous section. The design places relatively few requirements on the underlying hardware. We assume:

(1) Each processor has its own local tightly coupled memory which cannot be accessed by the other cores. This tightly coupled memory is used for speed intensive operations.

(2) The cores all have access to a common pool of shared memory.

(3) The cores are able to signal each other via interrupts.

(4) One or more of the cores have access to hardware peripherals for I/O.

This architecture even supports heterogeneous configurations of cores and is illustrated in Figure 31.

Figure . Internal SOC architecture needed to support heterogeneous multicore processing.

DSP0 is the "Primary Audio Core (PAC)" and performs I/O at the basic block size. The other "Coprocessors" process audio at multiples of the basic block size. The Coprocessors are signaled by the PAC based on their clock dividers. The PAC is essentially the conductor which keeps the entire cluster of processors synchronized.

The cores communicate via double buffers as before in the multithreaded design. The only difference is that the double buffers are allocated in shared memory. While one buffer is being filled, the other is being used for processing.

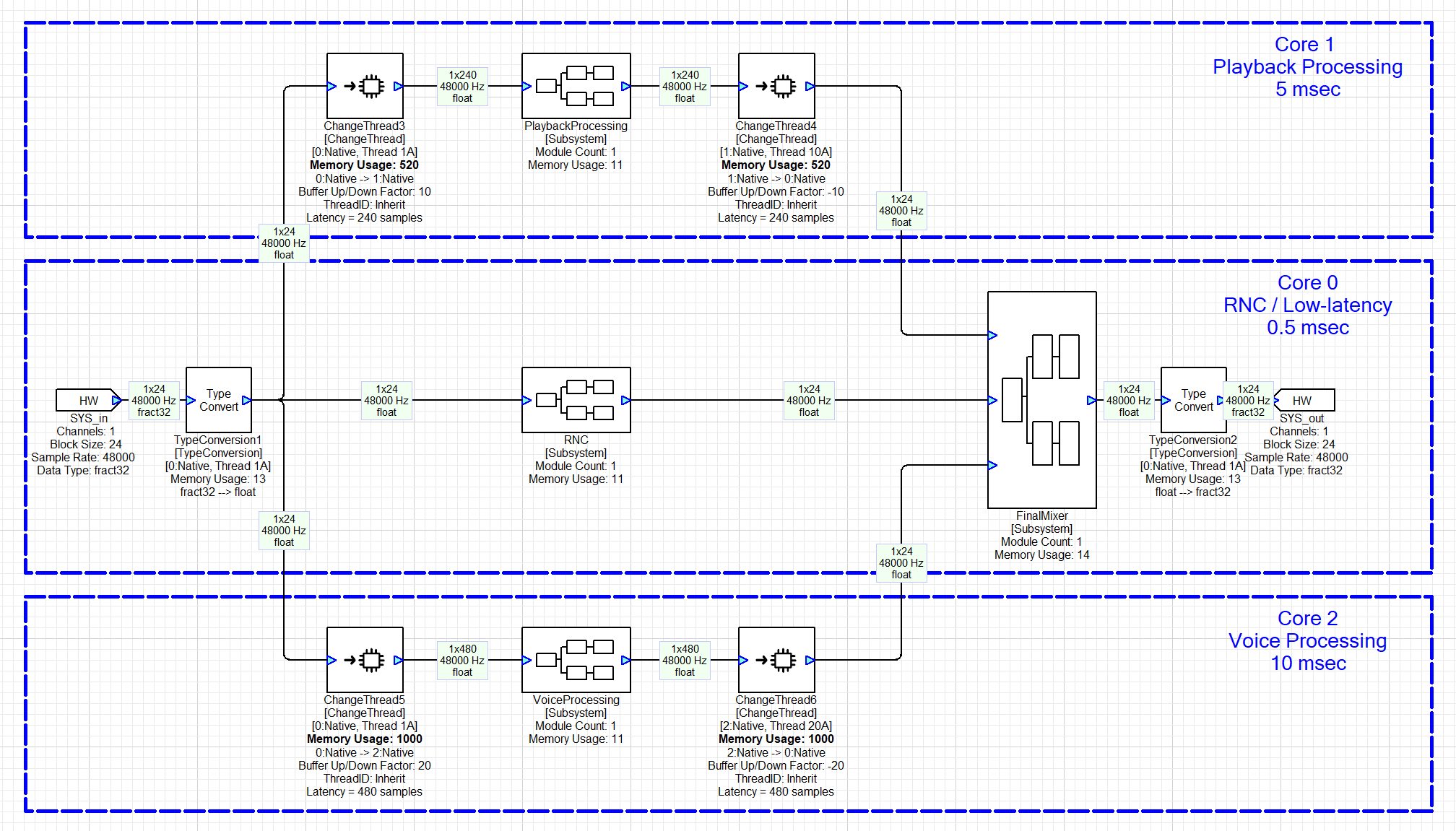

The ChangeThread module which was introduced earlier also allows you to specify the target core ID of subsequent processing. The target core ID is then propagated to downstream modules just as the threadID was propagated earlier. A useful feature of the ChangeThread module is that you can change the block size (buffering up or buffering down) at the same time as crossing to another core. Figure 32 shows an example of how the ChangeThread module is used. Core 0 is the interrupt controller and implements low latency RNC and a final mixer / limiter at a block size of 0.5 msec. Core 1 performs playback processing with a block size of 5 msec while Core 2 does voice processing with a block size of 10 msec.

Figure . Extension of Audio Weaver processing to multiple cores. The ChangeThread module is able to simultaneously span core boundaries and change block sizes.

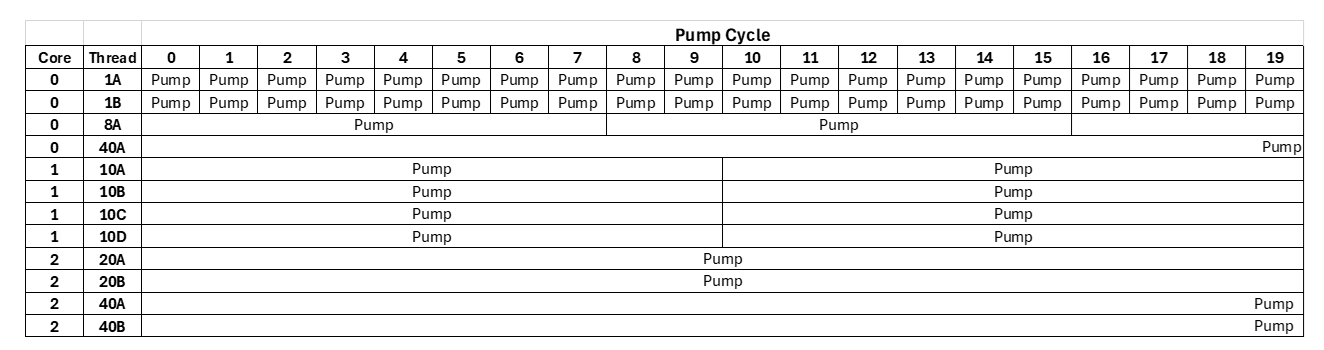

Synchronous Operation

Audio Weaver's real-time architecture is based on fully synchronous operation. Audio processing occurs in fixed block sizes, and block sizes through the system are multiples of the basic block size of the system. This provides consistent CPU loading (no spikes), fixed scheduling, and efficient use of computational resources.

The PAC manages DMA at the basic block size of the system. After every buffer is received, the PAC calls the function

INT32 awe_audioIsReadyToPumpMulti(AWEInstance* pAWE, UINT32 instanceID);

where the second argument instanceID is the core ID of the Coprocessor. This function returns a Boolean which indicates whether a thread on the Coprocessor is ready to pump. If true, then the PAC will signal the Coprocessor to process audio.

Audio Weaver's multicore architecture allows you to leverage additional threads within each core. In the 3 core design shown in Figure 32, suppose that each core contained the following block sizes / clock dividers:

-

Core 0: 0.5 msec, 0.5 msec, 4 msec, 20 msec

-

Core 1: 5 msec, 5 msec, 5 msec, 5 msec

-

Core 2: 10 msec, 10 msec, 20 msec, 20 msec [Core 2 wakes up every 10 msec based on IPCC]

-

Core 3: 4 msec, 10 msec. [GCD = 2 msec. Core 3 wakes up every 2 msec.]

-

Core 4: 10 msec, 11 msec. [GCD = 1 msec. Core 4 wakes up every 1 msec.]
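The wake-up periods noted in brackets for Cores 3 and 4 follow from the greatest common divisor of the block times on each core. A minimal sketch of that calculation is shown below; the function names are hypothetical, and block times are expressed in microseconds so that 0.5 msec remains an integer.

```c
#include <stdint.h>

/* Euclid's algorithm. */
static uint32_t gcd_u32(uint32_t a, uint32_t b)
{
    while (b != 0) {
        uint32_t t = a % b;
        a = b;
        b = t;
    }
    return a;
}

/* Wake-up period of a core: GCD of all its block times (microseconds). */
static uint32_t wake_period_us(const uint32_t *block_times_us, int count)
{
    uint32_t g = 0;   /* gcd(0, x) == x, so 0 is a neutral start value */
    for (int i = 0; i < count; i++)
        g = gcd_u32(g, block_times_us[i]);
    return g;
}
```

For example, block times of 4000 and 10000 microseconds give a wake-up period of 2000 microseconds, and 10000 and 11000 microseconds give 1000 microseconds.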

Processing is fully synchronous and triggered by the 0.5 msec interrupt controller. The system wide pattern of audio processing ("pumping") is shown in Figure 33.

Figure . System with 3 cores and each core implements 4 threads at different block sizes.

This architecture imposes some restrictions as to how block sizes can be used. Here are some examples:

-

An algorithm cannot change its block size on the fly at run-time. (To realize this, you'll need to operate the two algorithms in parallel and switch them between Active and Inactive at run-time. You'll need twice the memory, but the CPU load is constrained.)

-

Algorithms with block sizes of 240 and 256 samples cannot be directly cascaded. (To accomplish this, you would need a basic block size of 16 samples. You would have to first buffer down from 240 to 16 samples and then buffer up from 16 to 256 samples. This places the modules into layouts with clock dividers of 15 and 16.)

-

Asynchronous sample rate conversion cannot be supported (easily) in the design since wires have fixed block sizes.
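The numbers in the 240 and 256 sample example follow from the greatest common divisor of the two block sizes:

```latex
\gcd(240, 256) = 16, \qquad \frac{240}{16} = 15, \qquad \frac{256}{16} = 16
```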

Resynchronization

The AWECore processing library has internal logic which checks for CPU overruns (not completing processing in time for the next interrupt), and when detected, it pauses pumping and resynchronizes all threads.

Part of the resynchronization logic is in the function awe_audioGetPumpMask(). In the logic that computes the pump mask, we check to make sure that any thread to be started has finished its previous pump cycle. If it hasn't, then we set a "PUMP_OVERFLOW" bit in the layout structure and do not set the corresponding bit in the layout mask (that is, don't start this thread again). When an overflow occurs in a layout on some core, then we share this information with the PAC by setting an overrun bit in a dedicated location in the shared heap for that core.

Another possible overrun involves FiFoIn and FiFoOut in the ChangeThread module. In a multicore system, FiFoIn runs on one core and FiFoOut runs on a different core. In normal operation, they remain synchronized, i.e., FiFoIn writes into the first half of the double buffer in the shared heap while FiFoOut reads from the second half. If either FiFoIn or FiFoOut is delayed due to other system events, this is detected as an overrun and a flag in the dedicated shared heap header is set to indicate the overrun.

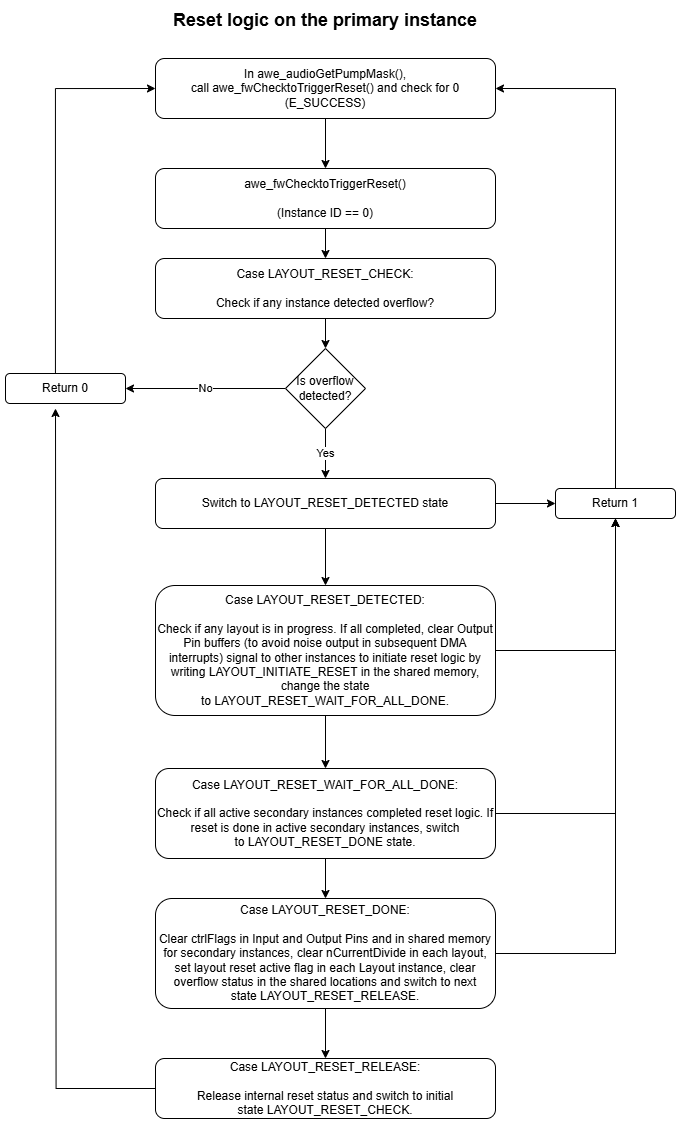

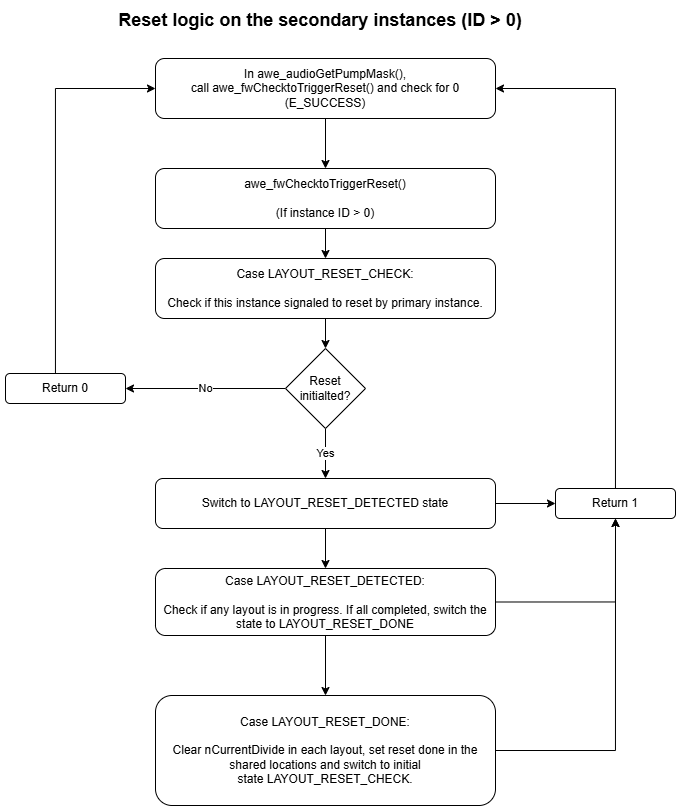

The awe_audioGetPumpMask() function on the PAC (instance 0) continually checks the shared heap for overruns occurring on any core. If an overrun is detected anywhere in the system, the resynchronization state machine starts and advances on every call to awe_audioGetPumpMask(). First, instance 0 signals all other instances to start resynchronization. Then all instances wait for all current pumps to complete. Depending on the load of each instance, this state may take a variable number of fundamental blocks to complete. In the next fundamental block period, instance 0 resets the hardware I/O pin double buffer flags and all the layout flags. Instance 0 then waits for all other instances to complete their reset. One fundamental block period after all instances have completed resynchronization, normal execution resumes. The flow diagrams for the PAC and Coprocessor instances provide more detail on this state machine.

Deferred Processing

Audio Weaver contains a separate Deferred Processing Thread for handling non-real-time module operations. The Deferred Processing Thread runs at a priority lower than any of the real-time audio processing threads. This ensures that control and tuning operations do not interfere with real-time audio.

Module Architecture

This section describes the architecture of individual modules that can be used by Audio Weaver. It provides a high-level understanding of the design and capabilities of modules. More detailed information aimed at module developers is found in the Audio-Weaver--Module-Developers-Guide.

Code and data for a module can be roughly divided into two categories. The first is information which resides on the PC and enables the module to be used in Designer. This includes graphical information, variable details, GUI inspector, and so forth. This information is contained in either MATLAB code or separate files. The second is code which runs on the target processor (or inside the Native PC simulator). The components are:

On the PC

-

ClassName_module.m -- MATLAB code which describes the pins, variables, and Designer behavior of the module. A class-based approach is used with multiple internal methods. This is the source of truth.

-

ClassName.xml -- XML code which is read by Designer. This is used to populate the Module Browser found on the left-hand side of the Designer GUI. This is auto-generated based on the _module.m file.

-

ClassName.bmp -- bitmap file used in the Module Browser.

-

ClassName.svg -- Vector graphics file used to draw the module on the Designer canvas.

-

ClassName.html -- Help documentation.

On the target

-

ModClassName.c -- C code with multiple internal functions:

-

Constructor() -- for memory allocation

-

Process() -- real-time processing

-

Set() / Get() -- for run-time control

-

Bypass() -- defines the module's bypass functionality

-

ModClassName.h -- Header file with module structure definitions

On the PC, your custom modules will be contained in DLL's which are loaded by the Server upon startup[^5]. The DLL contains the run-time code (from ModClassName.c) and also "schema" information which is a text-based description of the module's instance variables.

Arguments and Variables

Arguments in Audio Weaver are module configuration settings which affect memory allocation and wiring. Arguments can only be set at design time and not when the system is running. Examples are:

-

Length of an FIR filter.

-

Number of stages in a Biquad filter.

-

Decimation factor of the Downsampler module.

-

Number of input pins on an Interleaver module.

Variables exist on the target and are available to the Process() and Set() functions. Variables can be further classified based on how they are used:

Parameters -- set by the user (e.g., the frequency, gain, and Q of a peaking filter).

Derived -- internal variables which are computed by a module's Set() function (e.g., Biquad coefficients computed based on frequency, gain, and Q of a peaking filter).

State -- internal variables which are modified by a module's Process() function (e.g., the 2 state variables used by a direct form 2 Biquad filter).

Arguments exist primarily in MATLAB but may be assigned to variables when needed by the run-time code.

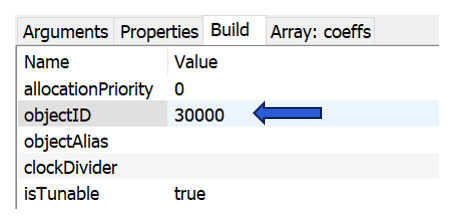

Module Instance Structure

Each module has an associated instance structure which holds its variables. The instance structure consists of a common header used by all modules, followed by module specific variables. Here are some examples:

Abs -- computes the absolute value of a signal. No tunable parameters.

typedef struct _awe_modAbsInstance

{

ModuleInstanceDescriptor instance;

} awe_modAbsInstance;

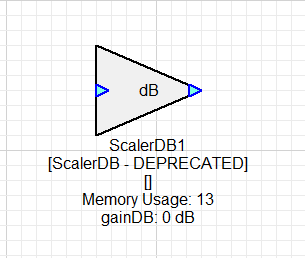

ScalerDB -- Scaler with configurable gain in dB. No smoothing[^6].

typedef struct _awe_modScalerDBInstance

{

ModuleInstanceDescriptor instance;

FLOAT32 gainDB; // Gain in dB.

FLOAT32 gain; // Gain in linear units.

} awe_modScalerDBInstance;

ScalerNV2 -- N channel scaler with per channel gain settings. Smoothed.

typedef struct _awe_modScalerNV2Instance

{

ModuleInstanceDescriptor instance;

FLOAT32 masterGain; // Overall gain to apply.

FLOAT32 smoothingTime; // Smoothing time constant (msec).

INT32 isDB; // Selects between linear(=0) and dB(=1)

FLOAT32 smoothingCoeff;// Smoothing coefficient.

FLOAT32* trimGain; // Array of trim gains, one per channel

FLOAT32* targetGain; // Computed target gains in linear units

FLOAT32* currentGain; // Instantaneous gains.

} awe_modScalerNV2Instance;

Every module starts with the common header:

ModuleInstanceDescriptor instance;

This is the base class of the module and contains information common to all modules (access to wires, module status, etc.). Note that the instance structures don't contain information about number of wires, block sizes, number of channels, or sample rate. This information is encoded into the wire buffers and queried by the module functions. You can see this in the Process() function for the ScalerDB module shown below:

void awe_modScalerDBProcess(void *pInstance)

{

awe_modScalerDBInstance *S = (awe_modScalerDBInstance *)pInstance;

**WireInstance **pWires = ClassModule_GetWires(S);**

UINT32 channels, blockSize;

**UINT32 numPins = ClassModule_GetNInWires(S);**

UINT32 pin;

FLOAT32 *src,*dst;

for(pin=0;pin<numPins;pin++)

{

src = (FLOAT32 *)(pWires[pin]->buffer);

dst = (FLOAT32 *)(pWires[numPins+pin]->buffer);

**channels = ClassWire_GetChannelCount(pWires[pin]);**

**blockSize = ClassWire_GetBlockSize(pWires[pin]);**

/* The same gain is used for all channels */

awe_vecScale(src, 1, dst, 1, S->gain, blockSize*channels);

}

}

Figure . Process() function for the ScalerDB module. The highlighted lines show how common module information (number of pins, channels, and block size) can be queried via macros.

Module C Functions

Modules have several C functions which control their behavior. Every module needs a Process() function while the others are optional.

Constructor()

This is an optional function and usually is needed if the module requires additional memory allocation or extra initialization. If a module only contains scalar variables in the instance structure (like the ScalerDB module above), then a Constructor() is not required. The ScalerNV2 module shown above has several array variables whose size is based on the number of channels. Here is its Constructor():

ModInstanceDescriptor *awe_modScalerNV2Constructor(INT32 * FW_RESTRICT retVal, UINT32 nIO, WireInstance ** FW_RESTRICT pWires, size_t argCount, const Sample * FW_RESTRICT args)

{

awe_modScalerNV2Instance *S = (awe_modScalerNV2Instance *) BaseClassModule_Constructor((ModClassModule *) &awe_modScalerNV2Class, retVal, nIO, pWires, argCount, args);

// Check if BaseClassModule_Constructor() finished properly. If not,

// the error code is in *retVal

if (S == NULL)

{

return 0;

}

#if defined(AWE_PROCESSOR_LOCK)

{

UINT32 nID = S->instance.instanceDescriptor.nUniqueInstanceID;

*retVal = nID;

awe_fwSetPackedVersion(retVal);

if (*retVal == nID)

{

*retVal = E_SUCCESS;

}

else

{

*retVal = E_CRC_ERROR;

return 0;

}

}

#endif

if ((S->trimGain = (FLOAT32 *) awe_fwMalloc(ClassWire_GetChannelCount(pWires[0]) * sizeof(float), AWE_HEAP_FAST2SLOW, retVal)) == 0)

{

// Error code is in *retVal

return 0;

}

if ((S->targetGain = (FLOAT32 *) awe_fwMalloc(ClassWire_GetChannelCount(pWires[0]) * sizeof(float), AWE_HEAP_FAST2SLOW, retVal)) == 0)

{

// Error code is in *retVal

return 0;

}

if ((S->currentGain = (FLOAT32 *) awe_fwMalloc(ClassWire_GetChannelCount(pWires[0]) * sizeof(float), AWE_HEAP_FAST2SLOW, retVal)) == 0)

{

// Error code is in *retVal

return 0;

}

return ((ModInstanceDescriptor *) S);

}

The call to BaseClassModule_Constructor() allocates memory for the module instance structure, assigns all scalar variables, and assigns wire information. This is then followed by 3 calls to awe_fwMalloc() to allocate memory for the gain arrays. The number of channels is determined by the macro ClassWire_GetChannelCount() and memory is allocated from the Audio Weaver heaps. More information about Audio Weaver heaps can be found in Section 4.4.

After the Constructor is complete, memory has been allocated for the array variables, but they have not yet been initialized to their default values.

Process()

Required function for every module. This implements the processing, and the code for the ScalerDB module was shown earlier in Figure 34. For greatest flexibility, you should write modules so that they can support an arbitrary block size and number of channels. A module's wire restrictions are specified in MATLAB code and MATLAB enforces these conditions at build time. Your module's C code does not have to do additional checking of the wire properties.

The module processing function is usually written in C code. If you need additional performance, you can call hand optimized functions written in assembly. DSP Concepts' library of optimized functions is called the Vector Library. It contains a wide variety of low-level primitives like Biquad filters, Scalers, Smoothed Scalers, FIR filters, and so forth, that have been optimized for many different processor architectures.

Some processors include specialized hardware to accelerate specific functions like ML inference, FFTs, IIR filters, and matrix operations. You can call these processor-specific APIs from your module code. Audio Weaver's SHARC library leverages some accelerators.

Bypass()

This optional function is used when a module needs special behavior when it is bypassed. The function header is exactly the same as the Process() function. For example, when bypassed, the TypeConvert module continues to call its Process() function. Not doing so would lead to serious distortion.

Set()

Used for run-time control and allows you to convert tunable parameters to derived variables. The ScalerDB module demonstrates how this works. The tunable variable gainDB needs to be applied as a linear gain. Instead of converting in the module's Process() function (this is wasteful of CPU), it is better to define a Set() function as shown below:

UINT32 awe_modScalerDBSet(void *pInstance, UINT32 mask)

{

awe_modScalerDBInstance *S;

S = (awe_modScalerDBInstance *)pInstance;

S->gain = undb20f(S->gainDB);

return 0;

}

The Set() function has two input arguments. The first is a pointer to the module's instance structure. The second is a mask which indicates which variable(s) in the module have been changed. This allows you to do selective computation in the Set() function. The constant MASK_ScalerDB_gainDB is defined in the module's header file (ModScalerDB.h).

The Audio Weaver framework is designed to directly set variables in module structures during run-time control or tuning. When controlling the ScalerDB module, the framework would first write gainDB and thereafter call the Set() function. We go into more detail into the Audio Weaver control architecture in Section 5.

The module's Set() function is called automatically after its Constructor() with mask = 0xFFFFFFFF. This is used to compute default derived values at startup. The Set() function is also called with mask = 0 when the system is being destroyed. This can be used by the module if it needs to clean up before shutting down (e.g., close a file or deallocate memory that was allocated outside of the Audio Weaver heaps).
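To illustrate selective computation with the mask, here is a sketch of a Set() function for a made-up module with two parameters. Everything in it is hypothetical (the structure, the mask values, and the function name); in a real module the MASK_ constants come from the generated header file and the function takes a void pointer to the instance.

```c
#include <stdint.h>

/* Hypothetical mask bits, for illustration only. */
#define SKETCH_MASK_delayMsec 0x1u
#define SKETCH_MASK_gainPct   0x2u

typedef struct {
    float    sampleRate;    /* Hz, fixed at construction in this sketch */
    float    delayMsec;     /* parameter */
    float    gainPct;       /* parameter */
    uint32_t delaySamples;  /* derived from delayMsec */
    float    gain;          /* derived from gainPct */
} SketchInstance;

static uint32_t sketch_set(SketchInstance *S, uint32_t mask)
{
    /* Recompute only the derived values whose parameters changed. */
    if (mask & SKETCH_MASK_delayMsec)
        S->delaySamples =
            (uint32_t)(S->delayMsec * S->sampleRate / 1000.0f + 0.5f);

    if (mask & SKETCH_MASK_gainPct)
        S->gain = S->gainPct / 100.0f;

    return 0;
}
```

A call with mask = 0xFFFFFFFF recomputes every derived value, which matches the call made after construction. A call with mask = 0 recomputes nothing, leaving room for cleanup logic at that point.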

Get()

This module function is rarely used but has complementary behavior to the module's Set() function. The Get() function is used to do a specific calculation before reading back an instance variable. For example, the SoftClip module applies a point nonlinearity to a signal. If you want to know the specific gain which is applied (the currentGain variable in the instance structure), then you need to perform a division. Instead of burdening the Process() function with this math, you can define a Get() function as shown below:

UINT32 awe_modSoftClipGet(void *pInstance, UINT32 mask)

{

awe_modSoftClipInstance *S = (awe_modSoftClipInstance *)pInstance;

WireInstance **pWires = ClassModule_GetWires(S);

INT32 numChannels = ClassWire_GetChannelCount(pWires[0]);

INT32 ch;

for (ch = 0; ch < numChannels; ch++)

{

if (S->lastIn[ch] != 0.0f)

{

S->currentGain[ch] = S->lastOut[ch] / S->lastIn[ch];

}

}

return 0;

}

Memory Allocation

Audio Weaver manages 4 internal memory heaps that can be used by modules. This design is specifically for DSP processors which have different internal and external memory blocks. The heaps are called: FAST, FASTB, SLOW, and SHARED. FAST and FASTB are typically in internal memory and support the dual access required to efficiently implement FIR filters. FAST would hold the filter coefficients and FASTB would hold the state variables. SLOW is usually much larger and mapped to external memory. Finally, SHARED memory is a recent addition and is available only in SOCs that support shared memory. The SHARED heap is used to implement interprocessor communication via the ChangeThread module.

FAST, FASTB, and SLOW are local to each audio processor core. Only the SHARED memory is visible to everyone. Often FAST and FASTB are placed in TCM for better performance.

Audio Weaver also supports a single heap design which combines FAST, FASTB, and SLOW. This is for processors which have a unified flat memory space, like an Arm Cortex-A[^7].

Memory allocation from the Audio Weaver heaps is via the function

void *awe_fwMalloc(UINT32 size, UINT32 heapIndex, INT32 *retVal);

You specify the size in bytes and which heap to allocate from via heapIndex. The function returns a pointer to the allocated memory as a void *, or upon failure returns an error code in retVal. There is a lot of flexibility in heapIndex. You can specify a single heap, AWE_HEAP_FAST, or a hierarchy of heaps like AWE_HEAP_FAST2SLOW. This first tries to allocate in FAST. If this is full, then it tries FASTB. And finally, if this is full, it attempts to allocate in SLOW.
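The fallback behavior of a heap hierarchy can be modeled with a simple bump allocator. The sketch below is a hypothetical model, not the AWECore internals: the structure, function names, and the -1 error value are all assumptions, and alignment handling is omitted for brevity.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical heap model; names and layout are illustrative only. */
typedef struct {
    uint8_t *base;   /* start of the heap's memory region */
    size_t   size;   /* total bytes in the region */
    size_t   used;   /* bytes handed out so far */
} SketchHeap;

/* Bump allocation: blocks are never freed individually. */
static void *heap_alloc(SketchHeap *h, size_t bytes)
{
    if (bytes > h->size - h->used)
        return NULL;
    void *p = h->base + h->used;
    h->used += bytes;
    return p;
}

/* Try each heap in order (e.g., FAST, then FASTB, then SLOW) and return
   the first successful allocation. On failure, return NULL and report a
   nonzero placeholder error code through retVal. */
static void *heap_alloc_hierarchy(SketchHeap **order, int count,
                                  size_t bytes, int *retVal)
{
    for (int i = 0; i < count; i++) {
        void *p = heap_alloc(order[i], bytes);
        if (p != NULL) {
            *retVal = 0;
            return p;
        }
    }
    *retVal = -1;
    return NULL;
}
```

Because allocation only ever advances the `used` counter, resetting a heap amounts to setting `used` back to zero, which fits a design in which all memory is released at once when the system is destroyed.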

The AWECore library can be configured at initialization to perform aligned memory allocations. This ensures that all wires buffers and array variables are aligned. Here are typical settings:

| Processor | Alignment (bytes) |
|---|---|
| X86 | 16 |
| Arm Cortex-A | 16 |
| SHARC+ | 16 |
| SHARC FX | 4 |
| Arm Cortex-M | 4 |
| HiFi 4 | 16 |
| HiFi 5 | 16 |
| Hexagon | 16 |
| CEVA-X2 | 16 |
| TI C6x | 16 |
| TI C7x | 32 |

The alignment settings may change from build-to-build. The alignment settings of the currently connected target are shown on the Server. See Figure 7.
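To make the effect of an alignment setting concrete, the following sketch rounds a size or offset up to an alignment boundary and checks whether a pointer is aligned. The function names are hypothetical and the code assumes the alignment is a power of two, which holds for the values 4, 16, and 32 listed above. It illustrates the arithmetic only and is not taken from AWECore.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Round 'value' up to the next multiple of 'alignment'.
 * 'alignment' must be a non-zero power of two (4, 16, 32, ...). */
static size_t demo_align_up(size_t value, size_t alignment)
{
    assert(alignment != 0 && (alignment & (alignment - 1)) == 0);
    return (value + alignment - 1) & ~(alignment - 1);
}

/* Non-zero when 'ptr' sits on an 'alignment'-byte boundary. */
static int demo_is_aligned(const void *ptr, size_t alignment)
{
    return ((uintptr_t)ptr & (alignment - 1)) == 0;
}
```

With a 16-byte setting, for example, a 17-byte request would occupy 32 bytes of heap so that the next allocation also starts on a 16-byte boundary.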

Audio Weaver does not support freeing individual blocks of memory. We avoid this because it leads to memory fragmentation and is not a good choice for deeply embedded systems. When a system is destroyed, Audio Weaver frees all heap memory and sets the contents to zero in preparation for the next design. You never have to free() memory allocated from the Audio Weaver heaps.
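This allocate-only, release-everything-at-once pattern is often called an arena or bump allocator. The sketch below shows the idea under that assumption; `DemoArena` and its functions are hypothetical names, and the real heap bookkeeping inside AWECore may differ.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical arena: blocks are only ever handed out, never returned. */
typedef struct {
    uint8_t *base;   /* start of storage */
    size_t   size;   /* total bytes */
    size_t   used;   /* bytes handed out so far */
} DemoArena;

/* Hand out the next 'size' bytes, or NULL if the arena is full. */
static void *demo_arena_alloc(DemoArena *a, size_t size)
{
    assert(a != NULL);
    if (a->size - a->used < size) {
        return NULL;
    }
    void *p = a->base + a->used;
    a->used += size;
    return p;
}

/* Called when the system is destroyed: release every block at once and
 * zero the storage so the next design starts from a clean heap. */
static void demo_arena_destroy_all(DemoArena *a)
{
    assert(a != NULL);
    memset(a->base, 0, a->size);
    a->used = 0;
}
```

Because there is no per-block free, the arena cannot fragment: the free space is always one contiguous region at the end.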

Modules are permitted to do memory allocation themselves outside of the Audio Weaver heaps. This often occurs if you are using C++ code or if you are wrapping an IP library that does its own allocation. Note that this "hidden" memory won't be visible to Audio Weaver and won't be captured by its profiling features. Also keep in mind that you have to manually free() memory when the system is destroyed. This is usually handled in your module's Set() function when MASK == 0. See Section 4.5.6.7.
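The cleanup pattern might look like the sketch below. The `DemoModuleInstance` structure and both functions are hypothetical and do not use the real Audio Weaver module signatures. The only behavior taken from the text is that a mask of 0 is the point at which hidden memory is released.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical module instance that owns memory outside the heaps. */
typedef struct {
    float  *scratch;      /* allocated with calloc(), invisible to Audio Weaver */
    size_t  scratchLen;   /* number of floats in 'scratch' */
} DemoModuleInstance;

/* Allocate the hidden buffer. Returns 0 on success, -1 on failure. */
static int demo_module_construct(DemoModuleInstance *m, size_t n)
{
    assert(m != NULL);
    m->scratch = (float *)calloc(n, sizeof(float));
    m->scratchLen = (m->scratch != NULL) ? n : 0;
    return (m->scratch != NULL) ? 0 : -1;
}

/* Hypothetical Set() handler: mask == 0 is treated as the destroy call. */
static void demo_module_set(DemoModuleInstance *m, uint32_t mask)
{
    assert(m != NULL);
    if (mask == 0) {
        free(m->scratch);        /* free(NULL) is a no-op, so this is repeatable */
        m->scratch = NULL;
        m->scratchLen = 0;
        return;
    }
    /* Non-zero mask: recompute derived variables here. */
}
```

Clearing the pointer after free() keeps the handler safe if it is called with a zero mask more than once.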

Module MATLAB Functions

Every module in Audio Weaver has associated MATLAB code which describes the module's capabilities and variables. This is implemented in Audio Weaver as an @awe_module class object. This section follows the ScalerDB module and shows relevant parts of the code. The ScalerDB module is contained in the file scaler_db_module.m.

scaler_db_module.m Walk Through

The entry point of this function is referred to as the MATLAB Constructor. It creates and returns an @awe_module object which contains the ScalerDB module. The top of the function contains the standard MATLAB function header:

function M=scaler_db_module(NAME,NUMPINS)

% M=scaler_db_module(NAME, NUMPINS)

% Creates a dB scaler for use with the Audio

% Weaver environment. The scaler has a single multichannel input and scales

% all channels by the same value.

%

% Arguments:

% NAME - name of the module.

% NUMPINS - Optional: number of inputs. Default = 1 input, 1 output.

% The ith input pin is then scaled and placed in the ith output

% pin. In all cases, the same scale factor is applied to all values.

% Copyright 2007. DSP Concepts, Inc. All Rights Reserved.

% Author: Paul Beckmann

% AudioWeaverModule [This tag makes it appear under awe_help]

% ----------------------------------------------------------------------

% Create the low-level object with render variables

% ----------------------------------------------------------------------

if (nargin < 2) || isempty(NUMPINS)

NUMPINS=1;

end

if NUMPINS < 1

error('ScalerDB module requires at least 1 input pin!');

end

The first argument to every Audio Weaver module function is the NAME of the module in the system. It is followed by an optional argument (same as arguments from Section 4.1) which indicates how many input pins the module should have. The function sets NUMPINS to its default of 1 when it is omitted or empty and then checks that NUMPINS is at least 1.

The next part of the code creates the @awe_module object and adds arguments.

M=awe_module('ScalerDB', 'Gain control with dB units and no smoothing');

add_argument(M, 'numPins','int', NUMPINS, 'const', 'number of input and output pins');

if (nargin == 0)

return;

end

The function returns if (nargin == 0), which means that no arguments, not even the NAME, were supplied to the function. This is provided so that Designer can easily query how many arguments a module takes without going through the entire instantiation process. With this code, Designer is able to present numPins as an argument that the user can edit:

The string 'ScalerDB' is the class name of the module and plays a vitally important role. This must be unique within the Audio Weaver module class name space. We recommend that you use your company name to generate unique names, like 'MyCompanyScalerDB'. The class name must be a valid C variable name and will be used to generate a variety of file names and variable names.

Next the name of the module is set based on the first argument NAME. The module also provides a defaultName which is used to generate the name when the module is dragged out in Designer. The defaultName is 'ScalerDB' and the first instance will be named "ScalerDB1", the second instance "ScalerDB2", and so forth.

M.name=NAME;

M.defaultName = 'ScalerDB';

M.processFunc=@scaler_db_process;

M.freqRespFunc=@scaler_db_freq_response;

M.testHarnessFunc = @test_scaler_db;

The module is then marked as deprecated. That means that there are newer modules which perform the same function. (Deprecated modules can continue to be used but are drawn in grey on the Designer canvas.)

M.isDeprecated = 1;

Input and output pins are then added to the module. NUMPINS inputs and NUMPINS outputs are added.

PT=new_pin_type([], [], [], 'float', []);

add_pin(M, 'input', 'in', 'Input signal', PT, NUMPINS);

add_pin(M, 'output','out', 'Output signal', PT, NUMPINS);

The new_pin_type.m function returns a structure, PT, which describes the properties of the pin. It specifies what wire properties the module can support. The arguments to this function are:

PT = new_pin_type(NUMCHANNELS, BLOCKSIZE, SAMPLERATE, DATATYPE, ISCOMPLEX);

NUMCHANNELS, BLOCKSIZE, and SAMPLERATE specify the properties of wires that can be connected to the pin. Audio Weaver Designer automatically validates connections when the system is built. All three properties use the same format. For example, for NUMCHANNELS you can express the following:

[] -- the empty matrix indicates that there are no constraints placed on the number of channels.

[N] -- a single value indicates that this module only supports N channels.

[M N] -- indicates that the module supports M to N channels. (Note, this is a row vector.)

[A; B; C; D] -- indicates that the module supports A, B, C, or D channels. (Note, this is a column vector.)

[M N STEP] -- indicates that the module supports M, M+STEP, M+2*STEP, ..., N channels.

There is even more flexibility in the range constraints and this is fully described in the Audio Weaver Module Developers Guide.
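As a rough model of how these constraint forms behave, the sketch below checks a candidate channel count against each one. It is an illustration in C with hypothetical function names, not the validation code used by Designer.

```c
#include <assert.h>
#include <stddef.h>

/* [M N]: any value from lo to hi inclusive. A single value [N] is the
 * special case lo == hi, and [] corresponds to skipping the check. */
static int demo_in_range(int value, int lo, int hi)
{
    return value >= lo && value <= hi;
}

/* [M N STEP]: lo, lo+step, lo+2*step, ..., not exceeding hi. */
static int demo_in_stepped_range(int value, int lo, int hi, int step)
{
    assert(step > 0);
    return demo_in_range(value, lo, hi) && ((value - lo) % step == 0);
}

/* [A; B; C; D]: one of an explicit list of allowed values. */
static int demo_in_list(int value, const int *allowed, size_t count)
{
    for (size_t i = 0; i < count; i++) {
        if (allowed[i] == value) {
            return 1;
        }
    }
    return 0;
}
```

For example, a pin declared with [2 8 2] would accept 2, 4, 6, or 8 channels and reject 5 or 10.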

DATATYPE is a string with one of these values:

'fract32' -- 32-bit fractional representation. Numbers in the range [-1 +1).

'int' -- signed 32-bit integers. Numbers in the range [-(2^31) (2^31)-1].

'float' -- single precision floating-point.

'*32' -- can be any 32-bit data type.
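The [-1 +1) range of 'fract32' is what you get when a signed 32-bit integer is interpreted as the integer value divided by 2^31. The sketch below assumes that common Q1.31 convention, which is not spelled out in this document, and uses hypothetical function names. It also shows why +1.0 itself cannot be represented and has to saturate.

```c
#include <assert.h>
#include <stdint.h>

/* Convert a float to Q1.31 (assumed fract32 layout), saturating values
 * outside [-1, +1). NaN is mapped to 0 to avoid an undefined cast. */
static int32_t demo_float_to_fract32(float x)
{
    double scaled = (double)x * 2147483648.0;   /* multiply by 2^31 */
    if (scaled != scaled) {
        return 0;                                /* NaN */
    }
    if (scaled >= 2147483647.0) {
        return INT32_MAX;                        /* just below +1.0 */
    }
    if (scaled <= -2147483648.0) {
        return INT32_MIN;                        /* exactly -1.0 */
    }
    return (int32_t)scaled;                      /* truncates toward zero */
}

/* Convert Q1.31 back to float. */
static float demo_fract32_to_float(int32_t q)
{
    return (float)((double)q / 2147483648.0);
}
```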

ISCOMPLEX indicates whether the module can support complex data.

0 = real data (the default)

1 = complex data

[] = real or complex

The ScalerDB module sets:

PT = new_pin_type([], [], [], 'float', []);

Thus, this module supports any number of channels, any block size, any sample rate, floating-point data only, and real data or complex data.

Next in scaler_db_module.m, two variables are added.

% ----------------------------------------------------------------------

% Add the target-specific state variables

% ----------------------------------------------------------------------

%Interface variable: Gain in DB

add_variable(M, 'gainDB', 'float', 0, 'parameter', 'Gain in dB.');

M.gainDB.range=[-100 100];

M.gainDB.units='dB';

%Hidden State Variable: Linear equivalent of gainDB

add_variable(M, 'gain', 'float', 1, 'derived', 'Gain in linear units.', 1);

M.gain.units='linear';

"gainDB" is a floating-point variable. The default value is 0. It is a parameter which can be set by the user. There is also a short description ("Gain in dB") which will be used throughout the generated code and documentation. Lastly, the units for this variable are set to 'dB'. This string is used to annotate inspectors.

This is then repeated for the "gain" variable. The main difference is that this variable is derived from gainDB rather than set directly, and it is marked as hidden so it won't be visible to the user.

Each variable added to the MATLAB code is automatically inserted in the module instance structure. Recall that ModScalerDB.h contains:

typedef struct _awe_modScalerDBInstance

{

ModuleInstanceDescriptor instance;

FLOAT32 gainDB; // Gain in dB.

FLOAT32 gain; // Gain in linear units.

} awe_modScalerDBInstance;

The next part of the MATLAB function specifies where to find the C code for the module's Process() and Set() functions. In Audio Weaver, you specify code fragments and then ModScalerDB.c and ModScalerDB.h are automatically generated.

awe_addcodemarker(M, 'processFunction', 'Insert:InnerScalerDB_Process.c');

awe_addcodemarker(M, 'setFunction', 'Insert:InnerScalerDB_Set.c');

This code was shown earlier in Figure 34 and Section 4.3.4.

The next part of the MATLAB code indicates that the module can perform in-place processing. That is, the same wire buffer can be used for the input and output of the module.

M.wireAllocation = 'across';

If the module cannot support in-place processing, then this should be set to 'distinct' (the default).
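A scaler is a natural candidate for in-place processing because each output sample depends only on the input sample at the same index. The sketch below illustrates this with a hypothetical `demo_scale` function. It is not the generated ScalerDB process function.

```c
#include <assert.h>
#include <stddef.h>

/* Scale 'count' samples by 'gain'. Each output sample is computed from
 * the input sample at the same index and nothing else, so 'in' and 'out'
 * may safely point to the same buffer. */
static void demo_scale(const float *in, float *out, size_t count, float gain)
{
    assert(in != NULL && out != NULL);
    for (size_t i = 0; i < count; i++) {
        out[i] = in[i] * gain;
    }
}
```

A module that reads input samples again after it has written the corresponding outputs, such as one that reorders channels, would corrupt its own input when run this way and needs distinct buffers.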

Commands are then added which define the module's inspector.

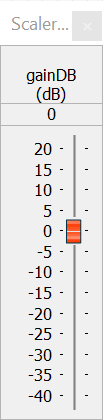

M.gainDB.guiInfo.controlType = 'slider';

M.gainDB.range = [-40 20 0.1];

add_control(M, 'gainDB');

The variable "gainDB" is exposed on the inspector. The range of the slider is -40 to +20 with a step size of 0.1. The inspector for this module is shown below.

The final lines of code specify where the module appears in the Module Browser and how it is drawn on the canvas. A 32x32 pixel custom bitmap is supplied for the Module Browser and a "triangle" shape with "dB" as a legend is used for the canvas. It is also possible to specify a custom SVG file.

M.moduleBrowser.path = 'Gains';

M.moduleBrowser.image = '../images/ScalerDB.bmp';

M.moduleBrowser.searchTags = 'Scaler Volume Multiply';

M.shapeInfo.basicShape = 'triangle';

M.shapeInfo.legend = 'dB';

ScalerDB MATLAB Set Function

The function scaler_db_set.m implements the MATLAB version of the module's set function. It is not strictly required, but it is used by DSP Concepts' regression test framework. This function translates the dB gain to its linear equivalent:

function M = scaler_db_set(M)

M.gain = undb20(M.gainDB);

return;

This set function is called when the module is instantiated or if the gainDB variable is changed while in Design mode.

ScalerDB MATLAB Processing Function

This is also an optional function and it implements the MATLAB version of the module's process function. It is used by DSP Concepts' regression test framework.

function [M, WIRE_OUT]=scaler_db_process(M, WIRE_IN)

WIRE_OUT=cell(size(WIRE_IN));

for i=1:length(WIRE_OUT)

WIRE_OUT{i}=WIRE_IN{i}*M.gain;

end

return;

Commonly Used MATLAB Functions

The 3 most commonly used MATLAB module functions (Constructor, Set, and PreBuild) are described in this section. Less frequently used functions are described in Section 4.5.5.

Constructor

There is no specific constructor, but we refer to the _module.m function as the "constructor" since it returns the module instance object. Required.

setFunc

Computes derived variables based on tunable parameters. Optional.

preBuildFunc

Used by a module to dynamically modify the output wire properties when the system is built. If the wires are unchanged (like the scaler_db_module.m), then this function is not needed since the base class propagates wire information unchanged by default. Examples of when you need to define a preBuildFunc:

Downsampler -- the output block size and sample rate are changed.

function M = downsampler_prebuild_func(M)

blockSize = M.inputPin{1}.type.blockSize;

if (rem(blockSize, M.D) ~= 0)

error('The downsample factor D does not evenly divide the blockSize');

else

newBlockSize = blockSize/M.D;

end

M.outputPin{1}.type.sampleRate=M.inputPin{1}.type.sampleRate/M.D;

M.outputPin{1}.type.numChannels=M.inputPin{1}.type.numChannels;

M.outputPin{1}.type.dataType=M.inputPin{1}.type.dataType;

M.outputPin{1}.type.blockSize=newBlockSize;

return;

TypeConvert -- the output data type is changed and an internal variable is set based on the input data type.

function M = type_conversion_prebuild(M)

% Detect the input dataType

switch(M.inputPin{1}.type.dataType)

case 'float'

M.inputType = 0;

case 'fract32'

M.inputType = 1;

case 'int'

M.inputType = 2;

case 'fract16'

M.inputType = 3;

otherwise

error('Unsupported input dataType');

end

switch(M.outputType)

case 0

outputTypeStr = 'float';

case 1

outputTypeStr = 'fract32';

case 2

outputTypeStr = 'int';

case 3

outputTypeStr = 'fract16';

otherwise

error('Unknown outputType');

end

M.outputPin{1}.type = M.inputPin{1}.type;

M.outputPin{1}.type.dataType=outputTypeStr;

M.outputPin{1}.type.dataTypeRange={outputTypeStr};

return;

You also need to define a preBuildFunc if the sizes of module variables change based on the wire information. An example is the Biquad filter where the preBuildFunc sets the size of the state variable based on the number of channels.

function M=biquad_prebuild_func(M)

% Set the size of the state variables based on the number of channels.

M.state.size=[2 M.inputPin{1}.type.numChannels];

M.state=zeros(2, M.inputPin{1}.type.numChannels);

M.outputPin{1}.type=M.inputPin{1}.type;

return;

Less Frequently Used MATLAB Functions

The @awe_module class has many methods that can be defined. Many of these are only used in rare cases when a module has non-standard requirements.

postBuildFunc

Rarely used. This is called if the module has to perform a specific function after all modules have been created during the build process. For example, the param_set_module.m uses this to locate the controlled module.

propagateChannelNamesFunc

Propagates custom channel name information. The base class function simply copies input names to output names. Define this if you need custom behavior, like the Router module.

propagateDelayFunc

Propagates custom delay information. The base class function simply copies the input delay to the output. Define this if your module specifically inserts delay, like the Delay module.

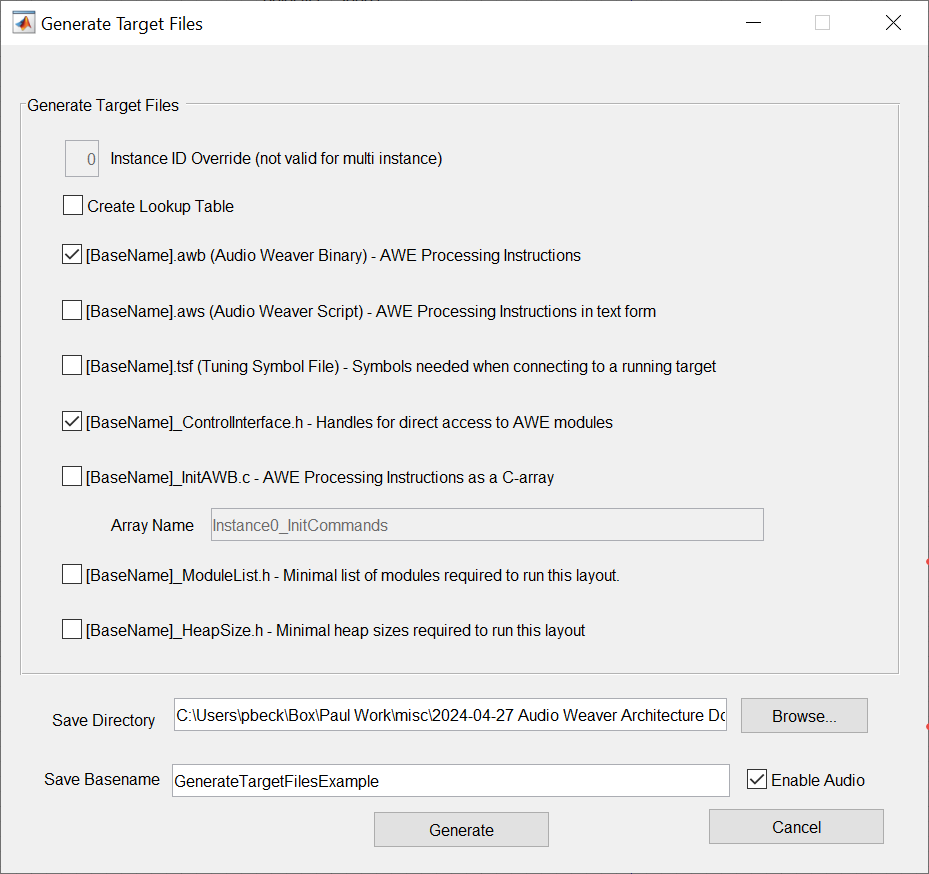

textLabelFunc